Addy Osmani, a developer at Google, recently shared a checklist on optimization for AI agents.

Addy isn’t talking about visibility on LLMs per se. Rather, he describes an optimization logic for AI agents, which he calls Agentic Engine Optimization (AEO).

The concept stays close to optimization for LLMs, with a slight shift: the goal is no longer only to be visible, but to be usable and actionable by agents, a bit like WebMCP.

So naturally, I had to talk about it.

Oh, and by the way, let us add one more acronym to the collection: SEO, GEO, CRO… and now AEO. Cool!

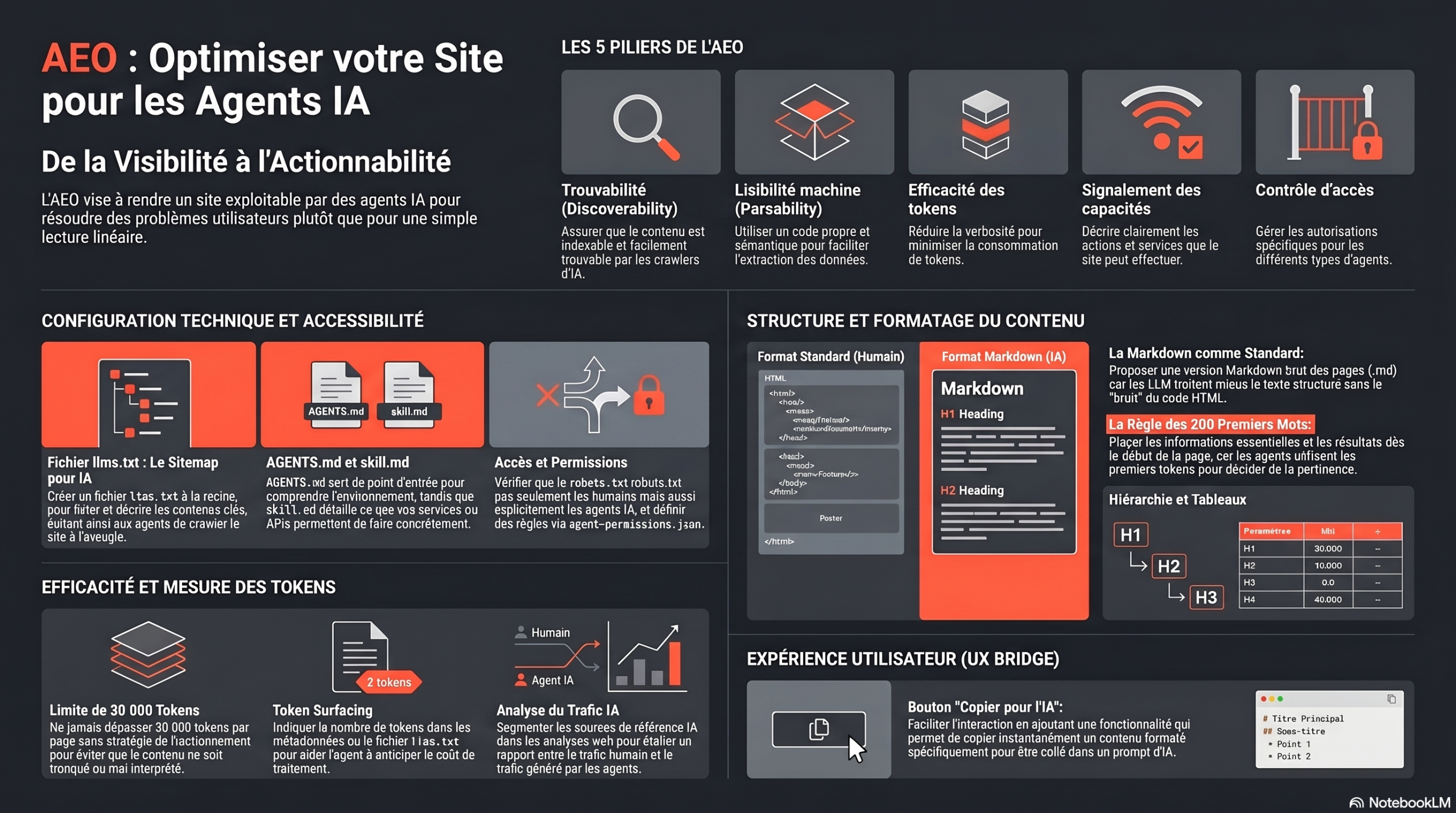

The 5 levels of optimization for AI agents

Addy mentions these 5 levels to optimize your pages for AI agents and make them usable:

- Discoverability

- Parsability (Machine readability)

- Token Efficiency (size)

- Capability Signaling (what your product does)

- Access Control (Robots.txt & Co)

How to optimize for AI agents?

Access – robots.txt

- Check: Are AI crawlers allowed on your site, or is crawling blocked?

- Solution: Explicitly allow AI crawlers to access your site.
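To make the check concrete, here is a minimal Python sketch that uses the standard library's `urllib.robotparser` to list which AI crawlers a given robots.txt blocks. The user-agent tokens and the sample file are illustrative assumptions, not a list taken from Addy Osmani's checklist, so verify the current names in each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Illustrative user-agent tokens (assumption): check each vendor's docs for the current list.
AI_USER_AGENTS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def blocked_ai_agents(robots_txt: str, url: str, agents=AI_USER_AGENTS) -> list[str]:
    """Return the agents that this robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_agents(SAMPLE_ROBOTS, "https://example.com/docs/"))
```

Running it against your own robots.txt content is a quick way to spot an accidental block before an agent does.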

Discovery – llms.txt

- Check: Do you have an llms.txt file?

- Solution: Create an llms.txt file that lists and describes your key content. It acts as a sitemap for AIs and saves them from crawling your site blind.
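As a rough illustration, the sketch below generates such a file in Python. It assumes the layout described in the llms.txt proposal as I understand it (an H1 title, a blockquote summary, then H2 sections with linked, described pages); the `Page` class, the function name and the example URL are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Page:
    title: str
    url: str
    description: str


def build_llms_txt(site_name: str, summary: str, sections: dict[str, list[Page]]) -> str:
    """Render a Markdown index: H1 title, blockquote summary, one H2 list per section."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, pages in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for page in pages:
            lines.append(f"- [{page.title}]({page.url}): {page.description}")
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site content, for illustration only.
print(build_llms_txt(
    "Example",
    "Docs for Example.",
    {"Docs": [Page("Quickstart", "https://example.com/quickstart.md", "Install and first call")]},
))
```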

Capability Signaling – skill.md

- Check: Do you have a skill.md that describes what your APIs do?

- Solution: Describe concretely what your product or API can do. Agents can then tell immediately whether your tool matches the user's intent.
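Here is one possible way to produce such a file, as a hedged Python sketch. The section layout is my own assumption, built from the fields the checklist further down asks for (capabilities, required inputs, constraints, links to key documents); it is not an official skill.md schema, and the product in the example is made up.

```python
def render_skill_md(
    name: str,
    summary: str,
    capabilities: list[str],
    required_inputs: list[str],
    constraints: list[str],
    key_documents: dict[str, str],
) -> str:
    """Render a skill description; the section layout here is hypothetical."""

    def bullets(items: list[str]) -> list[str]:
        return [f"- {item}" for item in items]

    lines = [f"# {name}", "", summary, ""]
    lines += ["## Capabilities", "", *bullets(capabilities), ""]
    lines += ["## Required inputs", "", *bullets(required_inputs), ""]
    lines += ["## Constraints", "", *bullets(constraints), ""]
    links = [f"[{title}]({url})" for title, url in key_documents.items()]
    lines += ["## Key documents", "", *bullets(links), ""]
    return "\n".join(lines)


# Made-up example service, for illustration only.
print(render_skill_md(
    name="Invoice API",
    summary="Creates and sends invoices on behalf of a merchant.",
    capabilities=["Create an invoice", "Send an invoice by email"],
    required_inputs=["API key", "Customer ID"],
    constraints=["100 requests per minute"],
    key_documents={"Quickstart": "https://example.com/docs/quickstart.md"},
))
```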

Accessibility – Agents.md

- Check: Are your pages easily accessible? Do AIs have entry points?

- Solution: Add an Agents.md file to the root of your project. It serves as an entry point for agents to understand your environment and your resources.

Content formatting

- Check: Is your content easy to extract?

- Solution: Offer a clean Markdown version of your pages. LLMs read structured plain text more easily than HTML filled with noise.
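To illustrate what "clean" can mean here, the following Python sketch strips navigation and footer noise from an HTML page and keeps headings, paragraphs and list items as Markdown-like text. It relies only on the standard library's `html.parser`; the tag lists, class name and sample page are assumptions, and a real converter would need to handle links, tables, code blocks and nested lists.

```python
from html.parser import HTMLParser

# Assumed noise containers; adjust to your own templates.
NOISE_TAGS = {"nav", "footer", "aside", "script", "style"}
BLOCK_PREFIX = {"h1": "# ", "h2": "## ", "h3": "### ", "li": "- ", "p": ""}


class MarkdownExtractor(HTMLParser):
    def __init__(self):
        super().__init__()
        self.noise_depth = 0  # > 0 while inside a noise container
        self.blocks = []      # finished Markdown blocks
        self.current = None   # text pieces of the block being collected
        self.prefix = ""

    def handle_starttag(self, tag, attrs):
        if tag in NOISE_TAGS:
            self.noise_depth += 1
        elif self.noise_depth == 0 and tag in BLOCK_PREFIX:
            self.prefix = BLOCK_PREFIX[tag]
            self.current = []

    def handle_endtag(self, tag):
        if tag in NOISE_TAGS:
            self.noise_depth = max(0, self.noise_depth - 1)
        elif tag in BLOCK_PREFIX and self.current is not None:
            text = " ".join("".join(self.current).split())  # normalize whitespace
            if text:
                self.blocks.append(self.prefix + text)
            self.current = None

    def handle_data(self, data):
        if self.noise_depth == 0 and self.current is not None:
            self.current.append(data)


def html_to_markdown(html: str) -> str:
    parser = MarkdownExtractor()
    parser.feed(html)
    parser.close()
    return "\n\n".join(parser.blocks) + "\n"


SAMPLE_HTML = """
<nav><ul><li>Home</li><li>Pricing</li></ul></nav>
<main><h1>Create an invoice</h1>
<p>Send a <code>POST</code> request to <code>/invoices</code>.</p></main>
<footer><p>Example Inc.</p></footer>
"""

print(html_to_markdown(SAMPLE_HTML))
```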

PS: Google has started publishing Markdown versions of some of its own pages. On the other hand, John Mueller explained that, as was the case with the llms.txt file last year, this is not necessarily confirmation that the format is effective.

What Google teams do is not an endorsement.

Page structure

- Use a coherent and logical H1 → H2 → H3 structure. Agents scan the structure rather than read linearly.

- Place essential information at the beginning of the page. Agents mainly use the first tokens to decide on relevance.

- Keep unnecessary elements (menus, footers, breadcrumbs) out of parsable content. These elements pollute the context and make agents less effective.

- Split your content by task or endpoint rather than into massive pages. Agents prefer several small, relevant blocks over a single heavy document.

- Organize your content from the user's perspective, not by your internal structure. Agents are trying to solve a problem, not explore your site.

- Use tables for parameters and place code right next to its explanation. This speeds up understanding and reduces the cost in tokens.
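The first point in the list above (a coherent H1 → H2 → H3 structure) is easy to check automatically. Below is a minimal Python sketch that flags skipped heading levels in a Markdown page. It assumes `#`-style headings and ignores fenced code blocks; the function name and the messages are my own.

```python
import re

HEADING_RE = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def heading_issues(markdown: str) -> list[str]:
    """Report a first heading that is not H1 and any jump of more than one level."""
    issues = []
    previous_level = 0
    in_fence = False
    for line_number, line in enumerate(markdown.splitlines(), start=1):
        if line.lstrip().startswith("```"):
            in_fence = not in_fence  # skip '#' comments inside code fences
            continue
        if in_fence:
            continue
        match = HEADING_RE.match(line)
        if not match:
            continue
        level, title = len(match.group(1)), match.group(2)
        if previous_level == 0:
            if level != 1:
                issues.append(f"line {line_number}: first heading '{title}' is H{level}, expected H1")
        elif level > previous_level + 1:
            issues.append(f"line {line_number}: '{title}' jumps from H{previous_level} to H{level}")
        previous_level = level
    return issues


print(heading_issues("# API\n\n### Auth\n\n## Errors\n"))
```

Going back up the hierarchy (H3 followed by H2) is treated as normal; only downward jumps that skip a level are reported.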

Token surfacing

- Limit page size and segment content. Content that is too long may be ignored, truncated, or misinterpreted.

I have already said that optimizing for tokens is the new crawl budget optimization. Hey, I was not wrong.
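For a quick sense of what this looks like in practice, here is a small Python sketch that estimates token counts, flags pages over a budget, and splits a Markdown page at its H2 headings. The 30,000 figure is the threshold quoted in the checklist further down; the four-characters-per-token ratio is only a rough heuristic for English text, not a real tokenizer, and the function names are hypothetical.

```python
MAX_TOKENS_PER_PAGE = 30_000  # threshold quoted in the checklist
CHARS_PER_TOKEN = 4           # rough heuristic (assumption), not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate the token count from the character count (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def pages_over_budget(pages: dict[str, str], limit: int = MAX_TOKENS_PER_PAGE) -> dict[str, int]:
    """Map each page path whose estimated token count exceeds `limit` to that count."""
    counts = {path: estimate_tokens(text) for path, text in pages.items()}
    return {path: count for path, count in counts.items() if count > limit}


def split_by_h2(markdown: str) -> list[str]:
    """Split a Markdown page into sections that each start at an H2 heading."""
    sections, current = [], []
    for line in markdown.splitlines(keepends=True):
        if line.startswith("## ") and current:
            sections.append("".join(current))
            current = []
        current.append(line)
    if current:
        sections.append("".join(current))
    return sections


print(split_by_h2("# Title\nintro\n## A\na\n## B\nb\n"))
```

For real numbers, swap `estimate_tokens` for the tokenizer of the model you care about; the rest of the logic stays the same.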

UX Bridge (Copy for AI)

Personally, I offer readers a “summarize with AI” button on my articles. That said, I don’t do it only for convenience. There is also the matter of manipulating the memory of AIs.

Addy Osmani suggests doing (sort of) the same for AIs with a “Copy for AI” button that makes it easy to copy and paste content from your pages.

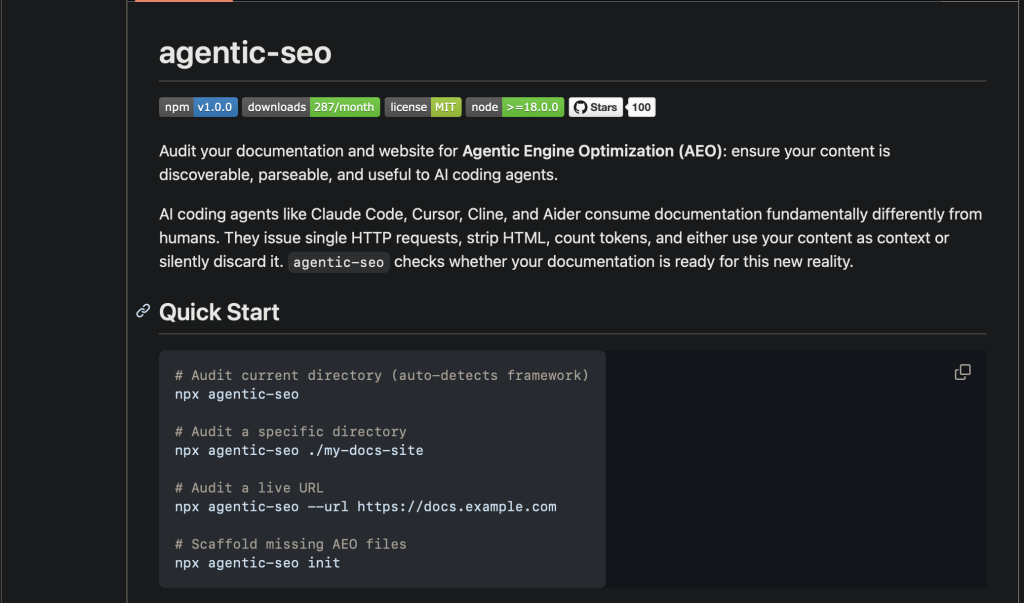

How to run a visibility audit for AI agents?

Addy Osmani has published a tool on GitHub that audits your pages and recommends how to optimize them for AI agents.

Google GEO checklist and AI agents

If you have to prioritize, here is what to check, according to Addy Osmani:

- The llms.txt file is present at the root and contains a structured index of all documentation?

- The robots.txt file does not inadvertently block known user-agents from AI agents?

- The agent-permissions.json file defines the access rules for automated clients?

- The Agents.md file is present in the code repositories and points to the relevant documents?

- The documentation pages are available in the raw Markdown format (and not only in rendered HTML)?

- Each page begins with a clear statement of results in the first 200 words?

- The titles are consistent and hierarchically correct?

- The code examples immediately follow their description in text?

- Parameter references use tables, not nested text?

- The number of tokens is tracked for each documentation page?

- No page exceeds 30,000 tokens without a splitting strategy?

- The number of tokens is indicated in llms.txt for key pages?

- The number of tokens is available as page metadata (META tag or HTTP header)?

- Skill.md files describe what each service/API does, and not just how to call it?

- Each skill includes: capabilities, required inputs, constraints, links to key documents?

- An MCP server is available for direct agent integration (if applicable)?

- AI referral sources are segmented in web analytics?

- Logging is in place to detect known HTTP fingerprints of AI agents?

- A baseline is established for the ratio of AI traffic to human traffic?

- A “Copy for AI” button is available on the documentation pages?

- Markdown source is accessible via a URL convention (for example, by appending the .md extension)?
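The logging and baseline items in the checklist above can be sketched in a few lines of Python: classify each request by its user-agent string, then compute the share of AI traffic. The marker substrings are illustrative assumptions, and "other" lumps together humans and non-AI bots, so treat the result as a rough baseline rather than a precise measurement.

```python
from collections import Counter

# Illustrative substrings (assumption); real agents and their user-agents change over time.
AI_AGENT_MARKERS = ("gptbot", "claudebot", "perplexitybot")


def classify_user_agent(user_agent: str) -> str:
    """Label a request as 'ai' if its user-agent contains a known marker, else 'other'."""
    lowered = user_agent.lower()
    return "ai" if any(marker in lowered for marker in AI_AGENT_MARKERS) else "other"


def ai_traffic_share(user_agents: list[str]) -> float:
    """Return the fraction of requests attributed to AI agents (0.0 for an empty log)."""
    counts = Counter(classify_user_agent(ua) for ua in user_agents)
    total = sum(counts.values())
    return counts["ai"] / total if total else 0.0


# Hypothetical user-agent strings pulled from an access log.
sample_log = [
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7)",
    "ClaudeBot/1.0",
]
print(ai_traffic_share(sample_log))
```

Tracking this share over time gives you the reference point the checklist asks for.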

My opinion on the AI agent optimization checklist

Well, let’s be honest: it’s not an official Google checklist. Nothing indicates that the Search team has validated all this. This is Addy Osmani’s vision, not a product recommendation.

If you are looking for a more complete, visibility-oriented checklist for LLMs, I have prepared a dedicated article with the GEO tools that I personally use.

PS: My tools are a bit atypical. If you are looking for mainstream GEO tools instead, I also have a selection of the most widely used ones.

PPS: They are not necessarily the best known, but they are interesting to watch.
