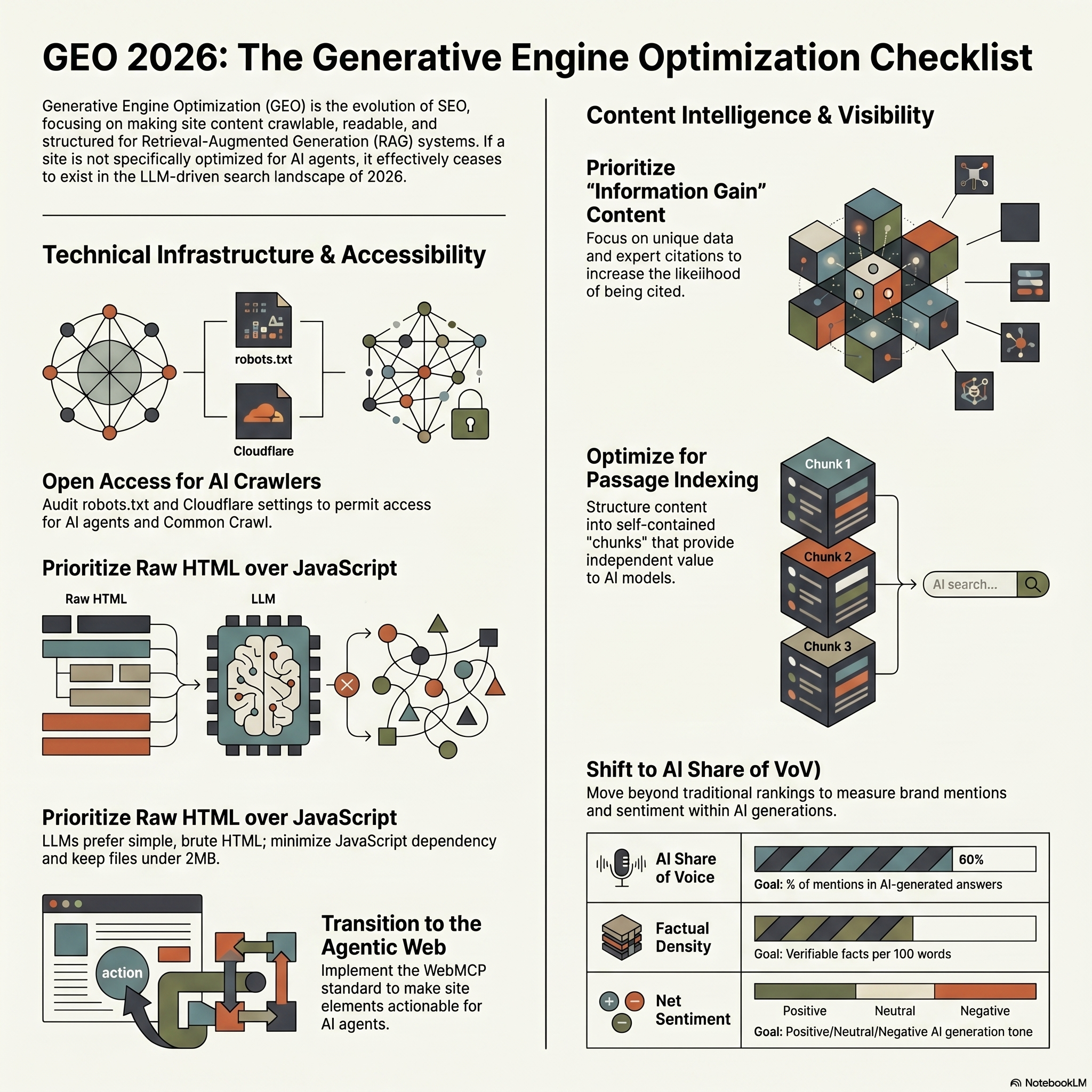

GEO (Generative Engine Optimization) has become essential for appearing in LLM responses. There is no ranking to win here: if your site is not crawlable, readable and structured for RAG-type systems, it does not exist.

Robots.txt, structure, chunks, AI Share of Voice: this checklist covers the essentials for auditing your site and making it usable by generative engines.

Technical GEO Checklist

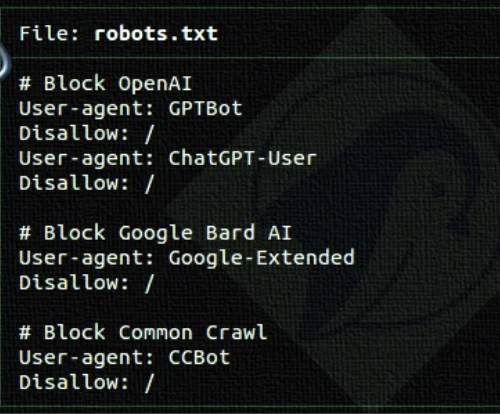

Audit your robots.txt file to verify that major AI agents have access.

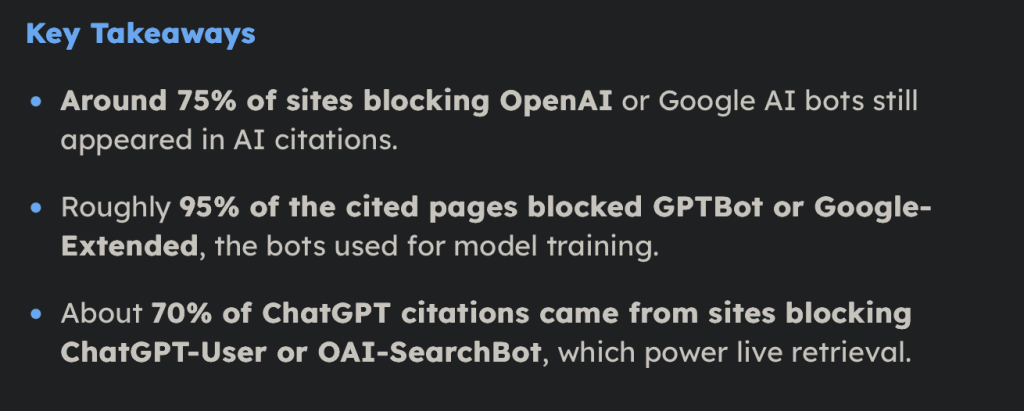

Many sites still block AI crawlers via their robots.txt. Some players, especially media outlets, have made this choice deliberately.

But if your goal is to appear in the responses generated by AIs, it is recommended to give them access.

An interesting side note: a recent study shows that blocking these crawlers does not guarantee that your content will disappear from LLMs.

For its part, Google is working on solutions that let sites, media outlets in particular, control how AIs use their content.
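
The audit above can be sketched with Python's standard `urllib.robotparser`. The list of user-agent tokens is an assumption (a non-exhaustive set of names AI vendors have published), and `example.com` is a placeholder; the robots.txt content is passed in as text so the check runs offline.

```python
from urllib.robotparser import RobotFileParser

# Assumed, non-exhaustive list of AI crawler user-agent tokens.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended", "CCBot"]


def audit_robots(robots_txt: str, url: str = "https://example.com/") -> dict[str, bool]:
    """Return, for each AI user agent, whether robots.txt lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_USER_AGENTS}


# Hypothetical robots.txt: one AI crawler is blocked, everything else is allowed.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

report = audit_robots(SAMPLE)
for agent, allowed in report.items():
    print(f"{agent:16} {'allowed' if allowed else 'BLOCKED'}")
```

To audit a live site, paste the content of your own `/robots.txt` in place of `SAMPLE` and test a few representative URLs rather than the homepage only.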

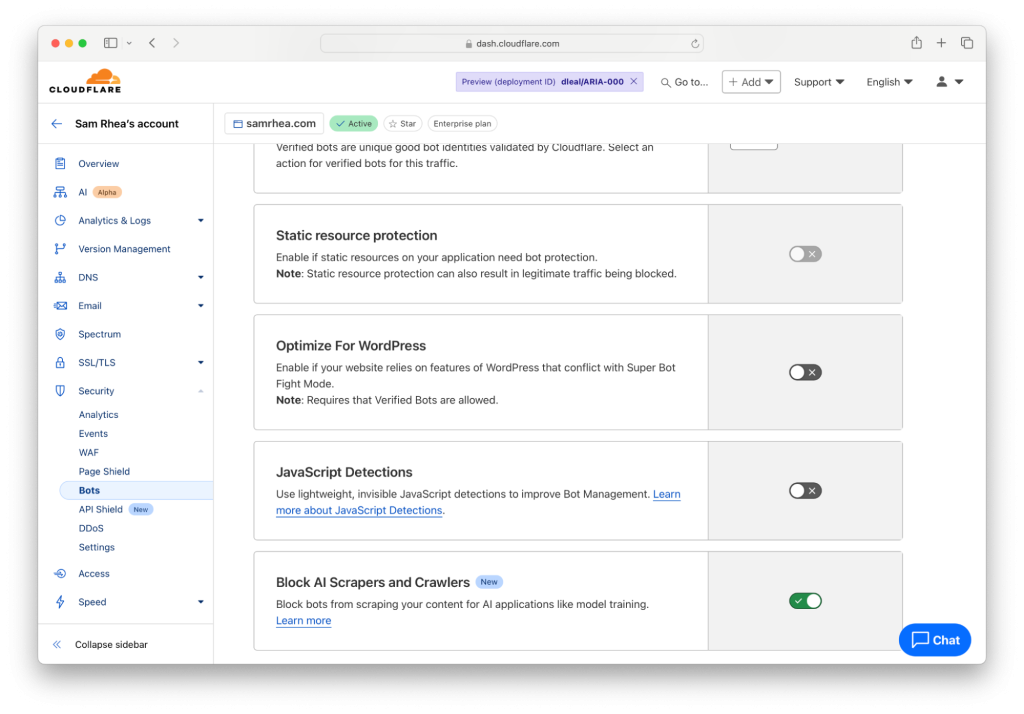

Allow AIs on Cloudflare.

Cloudflare is the CDN used by a large share of websites. Some AI crawlers may be blocked by default, so remember to check your dashboard.

If you want to appear in responses generated by AIs, make sure they are not blocked on the Cloudflare side.

Allow access to Common Crawl.

Common Crawl is widely used to train LLMs. It is one of the largest databases of the web, updated regularly.

Hence the value of allowing its bot in your robots.txt, and on the Cloudflare side as well.

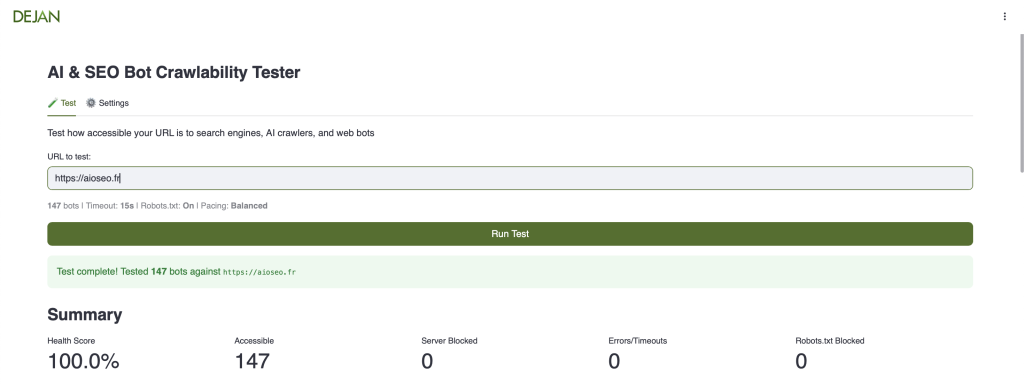

Check the accessibility of your site by AIs.

Dejan.ai offers a practical tool to check whether your site is accessible to crawlers.

Take the results with a grain of salt, but it remains a good starting point.

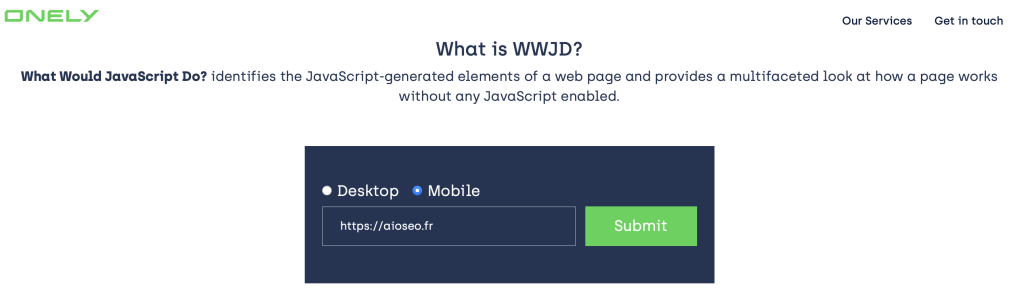

Test your JavaScript dependency.

Google handles JavaScript well thanks to its Web Rendering Service. But LLM crawlers do not yet have this level of rendering. They prefer simple, raw HTML.

A simple test: disable JavaScript and see what’s left.

Personally, I use Onely WWJD. It’s fast, free, with a fairly telling comparative view.

Otherwise, you can also disable JS directly in Chrome, or go through the Search Console inspection tool.

In short, all roads lead to Rome. The idea is to understand what AI crawlers actually see.
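
The same test can be approximated in code. Here is a minimal sketch, using only the standard library, of what a crawler that does not execute JavaScript would read in the raw HTML. The sample page is hypothetical, and real crawlers may extract text differently.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect the text present in raw HTML, ignoring code and styles."""

    SKIPPED = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when we are outside <script>, <style> and <template>.
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())


def raw_text(html: str) -> str:
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


# Hypothetical page whose main content is injected client-side.
SAMPLE = """<html><body>
<h1>Pricing</h1>
<div id="app"></div>
<script>document.getElementById("app").textContent = "Plans from 9 EUR";</script>
</body></html>"""

print(raw_text(SAMPLE))
```

In this sample only the heading survives: the price is written by the script, so it never appears in the text a non-rendering crawler receives.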

Check the size of your HTML files.

Google indicates a limit of about 2 MB per HTML file. Beyond that, some pages may not be properly crawled or indexed.

If Google already runs into limits at this level, we can assume that other AI crawlers and LLMs, which often have lighter rendering capabilities, will be even more sensitive to this kind of constraint.
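
A quick way to check this is to measure the HTML in bytes rather than characters. The sketch below treats the 2 MB figure quoted above as a working threshold; the exact cut-off applied by any given crawler is an assumption.

```python
LIMIT_BYTES = 2 * 1024 * 1024  # working threshold based on the ~2 MB figure above


def html_size_report(html: str, limit: int = LIMIT_BYTES) -> dict:
    """Measure the UTF-8 size of an HTML document against a byte limit."""
    size = len(html.encode("utf-8"))
    return {"bytes": size, "ratio": size / limit, "over_limit": size > limit}


# Accented characters take 2 bytes in UTF-8, so a page can exceed the limit
# even when its character count is well below it.
page = "é" * (1024 * 1024 + 1)
print(len(page), html_size_report(page))
```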

Semantic Geo Checklist

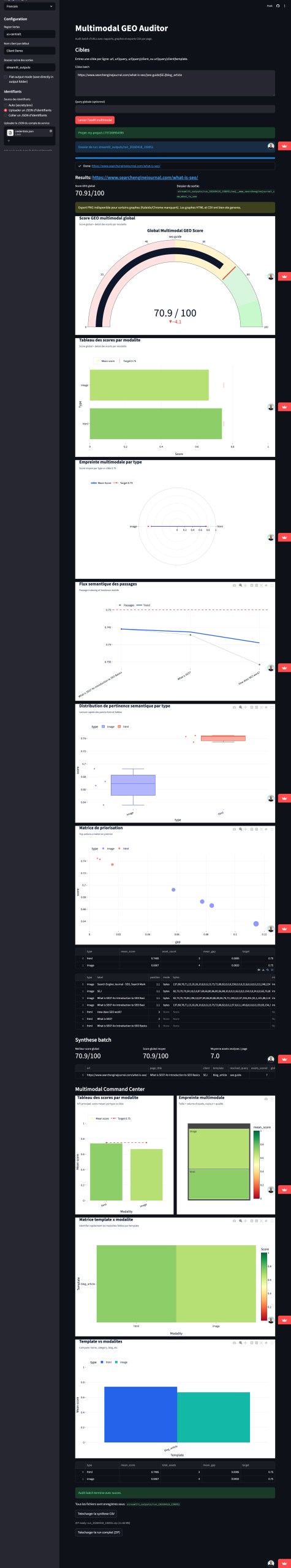

Calculate the score Multimodal Geo of your Templates Pages

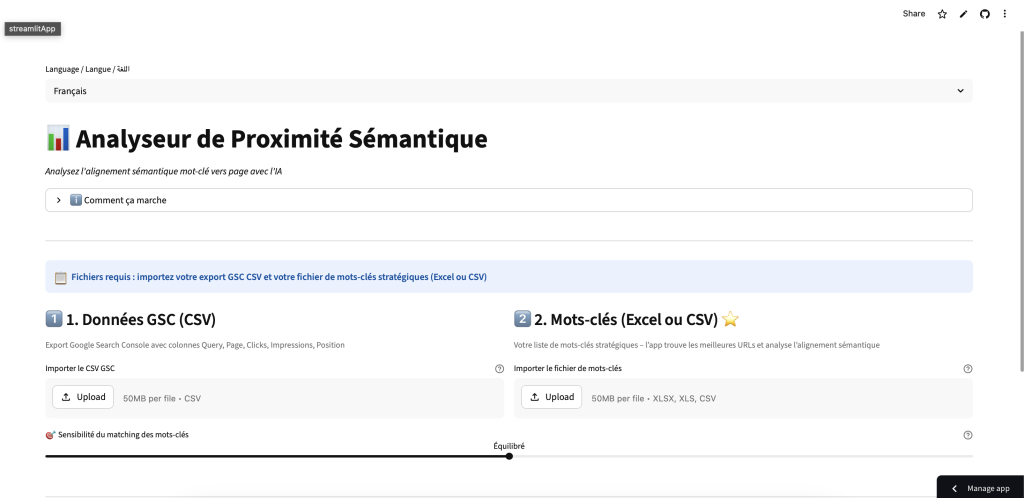

Calculate the semantic proximity between your keywords and your URLs.

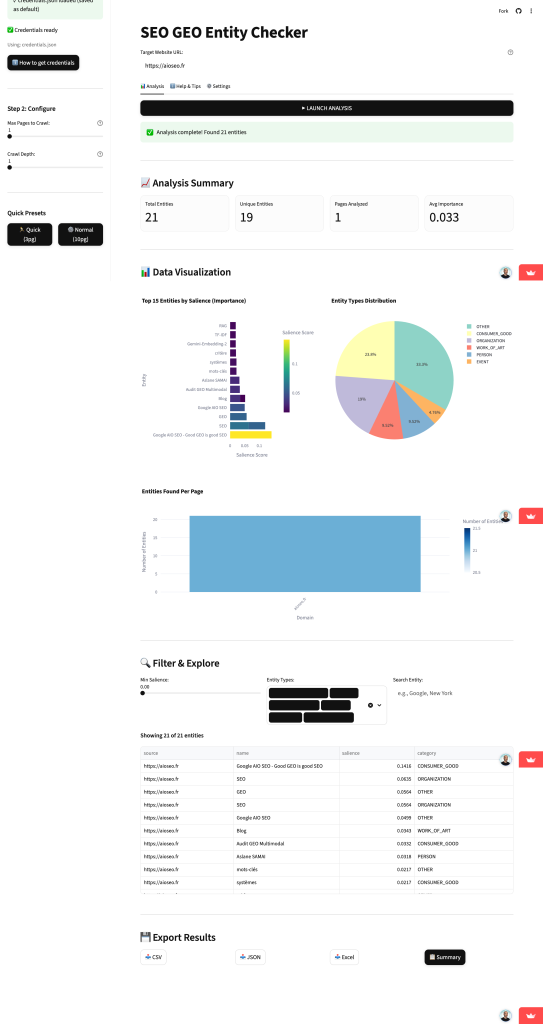
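
As a rough illustration of the keyword/URL comparison, here is a toy sketch that scores the overlap between a keyword and a URL path with cosine similarity over word counts. This is a lexical stand-in chosen for simplicity: a real semantic measure would compare embeddings, and nothing here reflects how the tool in the screenshot works. The URLs are placeholders.

```python
import math
import re
from collections import Counter
from urllib.parse import urlparse


def tokens(text: str) -> Counter:
    """Lowercase word counts, splitting on anything that is not a letter or digit."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def keyword_url_proximity(keyword: str, url: str) -> float:
    """Cosine similarity between the keyword and the words of the URL path."""
    return cosine(tokens(keyword), tokens(urlparse(url).path))


for url in ("https://example.com/blog/technical-geo-checklist",
            "https://example.com/p?id=4821"):
    print(url, round(keyword_url_proximity("technical geo checklist", url), 3))
```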

Detect the entities on your pages and improve them.

The WebMCP standard and the Agentic Web

Understand the ‘Web Model Context Protocol’, which lets you turn a passive site into one that AI agents can operate (Agentic Commerce).

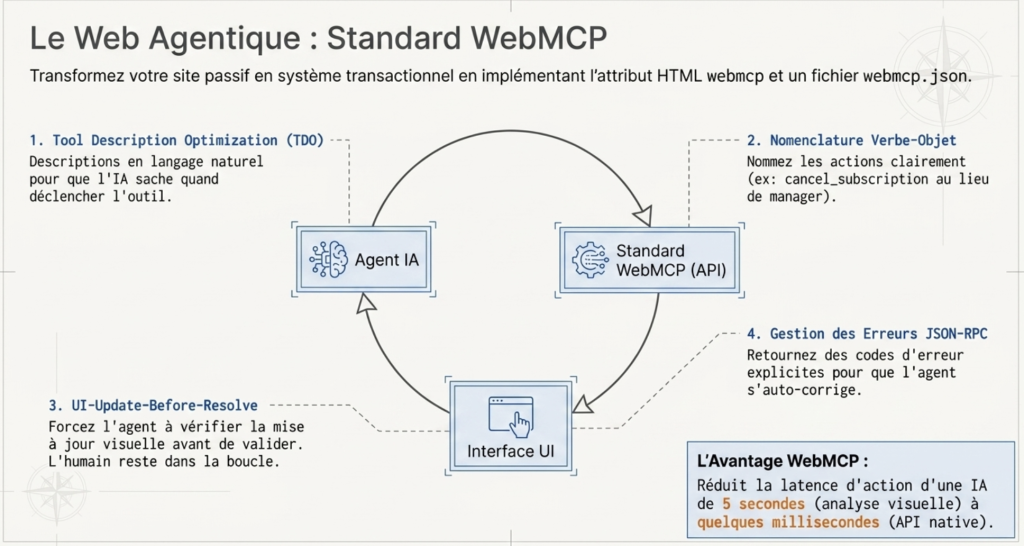

- Implement the WebMCP HTML attribute: add this attribute to your key interactive elements (price calculators, booking forms, product search).

- Tool Description Optimization (TDO): write precise, natural-language descriptions for your web tools so that the agent knows exactly when and why to trigger them.

- Verb-object naming: give your WebMCP actions names that are clear to the AI (e.g. `cancel_subscription` instead of a generic `manager`).

- Limit the scope of action: expose to AI agents only the tools that make sense in the current state of the page, to avoid disturbing their ‘reasoning’.

- Opt for `ui-update-before-resolve`: force the AI agent to check that the interface has been visually updated before considering an action successful.

- Fine-grained error handling: return useful, explicit errors in code (following the JSON-RPC standard, for example) so that an agent can correct itself after a bad form submission.

- Create a `webmcp.json`: anticipate future developments by hosting this file at the root to declare your site’s transactional capabilities even before the crawl.

- Optimize latency: a WebMCP API reduces an AI’s action time from 5 seconds (via visual analysis of the screen) to a few milliseconds, favoring your selection by the agent.

- Human in the loop: design these tools as a visible collaboration: the agent pre-fills the actions, the human validates. Don’t hide the automation.
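
To make the error-handling point concrete, here is a minimal sketch of an explicit error shaped as a JSON-RPC 2.0 response. The `-32602` code ("Invalid params") comes from the JSON-RPC 2.0 specification; the function name, the field name and the layout of `data` are hypothetical, and whether a given WebMCP implementation expects exactly this envelope is an assumption.

```python
import json

INVALID_PARAMS = -32602  # "Invalid params" code defined by JSON-RPC 2.0


def form_error(request_id, field: str, reason: str) -> str:
    """Build a JSON-RPC 2.0 error response that tells the agent what to fix."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "error": {
            "code": INVALID_PARAMS,
            "message": "Invalid params",
            # Hypothetical payload: name the offending field and how to correct it.
            "data": {"field": field, "reason": reason},
        },
    })


print(form_error(7, "check_in_date", "must be today or later, format YYYY-MM-DD"))
```

An error like this gives the agent enough detail to retry with a corrected value, which a bare "submission failed" message does not.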

DOM cleaning

Keep your HTML clean, avoiding massive inline CSS that disrupts data extraction.
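
One way to spot heavy inline CSS is to count `style` attributes across a page. The sketch below uses the standard-library HTML parser; what counts as "too much" is left to your judgment, since no threshold is given here.

```python
from html.parser import HTMLParser


class InlineStyleCounter(HTMLParser):
    """Count elements and measure how much inline CSS they carry."""

    def __init__(self) -> None:
        super().__init__()
        self.elements = 0
        self.styled = 0
        self.style_chars = 0

    def handle_starttag(self, tag, attrs):
        self.elements += 1
        for name, value in attrs:
            if name == "style" and value:
                self.styled += 1
                self.style_chars += len(value)


def inline_style_stats(html: str) -> dict:
    counter = InlineStyleCounter()
    counter.feed(html)
    counter.close()
    return {
        "elements": counter.elements,
        "styled_elements": counter.styled,
        "style_chars": counter.style_chars,
    }


sample = '<div style="color:red"><p>Hi</p><span style="margin:0">x</span></div>'
print(inline_style_stats(sample))
```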

GEO Content Checklist

Bet on “Information Gain” content.

The idea is simple: offer something that others have not already said.

A different angle, key figures, a new reading, exclusive data… In short, real added value.

It is this type of content that is most likely to be picked up, understood and used by AIs.

Integrating direct quotes from recognized experts boosts relevance on societal or explanatory queries by nearly 40%.

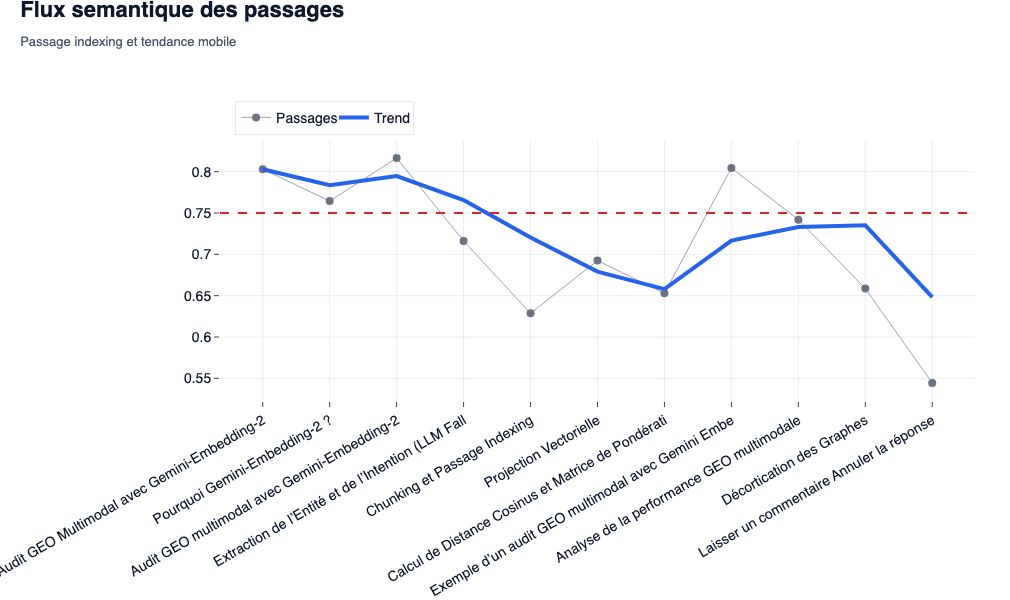

Optimize your chunks for passage indexing.

We know that AIs use passage indexing. This was notably confirmed by the CEO of Perplexity.

The idea is simple: optimize your content at the level of the “passage” (text block), not only at the level of the entire page.

Each section must be self-sufficient and understandable on its own.

Here is an example obtained with the Multimodal GEO tool mentioned above.

We no longer only look at a page as a single block, but as a sequence of independent segments with their own semantic coherence.

We see here that certain types of content (chunking, embeddings, multimodal analysis) have a more stable impact than others, with an overall trend that varies according to the structure of the passages.

This is exactly what AIs are trying to extract: stand-alone information blocks, rather than an entire document.
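
To review your own content at passage level, a minimal sketch like the one below splits a Markdown document into one passage per H2/H3 heading. The "needs context" flag is a crude, hypothetical heuristic (a passage opening with a pronoun probably depends on what came before), not a rule used by any AI system.

```python
import re

# Hypothetical heuristic: openers that usually point back to earlier text.
DANGLING_OPENERS = {"it", "this", "that", "they", "these", "those"}


def split_passages(markdown: str) -> list[dict]:
    """Split a Markdown document into passages, one per H2/H3 heading."""
    sections = [("(intro)", [])]
    for line in markdown.splitlines():
        match = re.match(r"^#{2,3}\s+(.*)", line)
        if match:
            sections.append((match.group(1).strip(), []))
        else:
            sections[-1][1].append(line)

    passages = []
    for heading, lines in sections:
        text = " ".join(part.strip() for part in lines if part.strip())
        if not text:
            continue  # skip headings with no body
        first_word = text.split()[0].strip(".,:;!?").lower()
        passages.append({
            "heading": heading,
            "text": text,
            "words": len(text.split()),
            "needs_context": first_word in DANGLING_OPENERS,
        })
    return passages


DOC = """\
## What is passage indexing?
Passage indexing scores each block of text on its own.

## How long should a chunk be?
It depends on the retriever.
"""

for passage in split_passages(DOC):
    print(passage["heading"], passage["words"], passage["needs_context"])
```

The second passage is flagged because "It depends on the retriever" makes no sense once separated from its heading. Rewriting it as "The right chunk length depends on the retriever" makes the block stand alone.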

Omnichannel Presence (Social & Forums)

AI draws heavily on Reddit, Quora and LinkedIn for its human-sourced answers. Make sure your brand has a legitimate presence there.

Digital PR

Getting verbatim quotes from your in-house experts published in the press is one of the most powerful authority signals for grounding.

Work on structured data

- Deploy the `Organization`, `Person` or `Product` schemas to turn your brand into a permanent ‘node’ of the Knowledge Graph.

- Use the `sameAs` property to validate your entity by formally linking it to external authority databases (Wikidata, Crunchbase, LinkedIn).

- Assign persistent identifiers (`@id`) to link mentions of an entity across multiple web pages without having to redefine it.

- Maintain brand-name consistency across the whole web to avoid ‘structural decay’ on the AI side.

- Use the `HowTo` schema to structure procedures in 3 to 7 steps, a format particularly favored by AI Overviews.
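
As an illustration of the `@id` and `sameAs` points, here is a minimal sketch that builds `Organization` markup as JSON-LD. The brand name, the `example.com` URLs and the profile links are all placeholders to replace with your own.

```python
import json

# Placeholder entity: every name and URL below is hypothetical.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "@id": "https://example.com/#organization",  # persistent identifier, reused on every page
    "name": "Example Corp",
    "url": "https://example.com/",
    "sameAs": [
        "https://www.wikidata.org/wiki/Q00000000",  # placeholder Wikidata item
        "https://www.crunchbase.com/organization/example-corp",
        "https://www.linkedin.com/company/example-corp",
    ],
}


def to_script_tag(data: dict) -> str:
    """Serialize the markup the way it is usually embedded in a page."""
    return f'<script type="application/ld+json">\n{json.dumps(data, indent=2)}\n</script>'


tag = to_script_tag(organization)
print(tag)
```

Other pages can then point to the same entity with `{"@id": "https://example.com/#organization"}` instead of redefining it.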

Data Freshness (Recency Bias)

- Update your guides very regularly; AI suffers from a recency bias and prefers to cite the most recent information.

- Fill in `datePublished` and `dateModified`: data freshness is vital and non-negotiable for being selected by LLMs.

- Validate the expertise of the author (`author`) and the publisher (`publisher`) in the schema to send clear E-E-A-T signals.
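
The freshness and authorship fields can be sketched the same way. This minimal example builds `Article` markup with `datePublished`, `dateModified`, `author` and `publisher`; the headline, author name and publisher identifier are hypothetical, and the date check is a small safeguard added for illustration.

```python
import json
from datetime import date


def article_jsonld(headline: str, published: date, modified: date,
                   author_name: str, publisher_id: str) -> dict:
    """Build Article markup with explicit freshness and authorship fields."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@id": publisher_id},
    }


markup = article_jsonld(
    "Technical GEO checklist",
    published=date(2024, 1, 15),
    modified=date(2024, 6, 3),
    author_name="Jane Doe",                            # hypothetical author
    publisher_id="https://example.com/#organization",  # hypothetical @id
)
print(json.dumps(markup, indent=2))
```

Update `dateModified` only when the content has genuinely changed, so the date stays a trustworthy signal.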

GEO-Friendly Template

- Phrase your H2/H3 headers as natural-language queries, as they would be typed into a prompt.

- Place the direct, complete answer immediately under its header (ideally within the first 150 words).

- Add ‘tl;dr’ summaries at the top of your long content to prepare extraction (Answer Priming).

- Use bulleted or numbered lists, as well as tables, to maximize how easily AI can extract the data.

- Increase ‘factual density’ (verifiable facts per 100 words) to satisfy the models’ strict anti-hallucination protocols.

- Deploy a ‘Query Fan-Out’ strategy by covering the full set of latent questions (sub-queries) related to your main topic, anticipating the models’ synthesis behavior.

Off-Page GEO Checklist

- Launch ‘unlinked mention’ reclamation campaigns by asking for a backlink wherever your brand is cited in plain text.

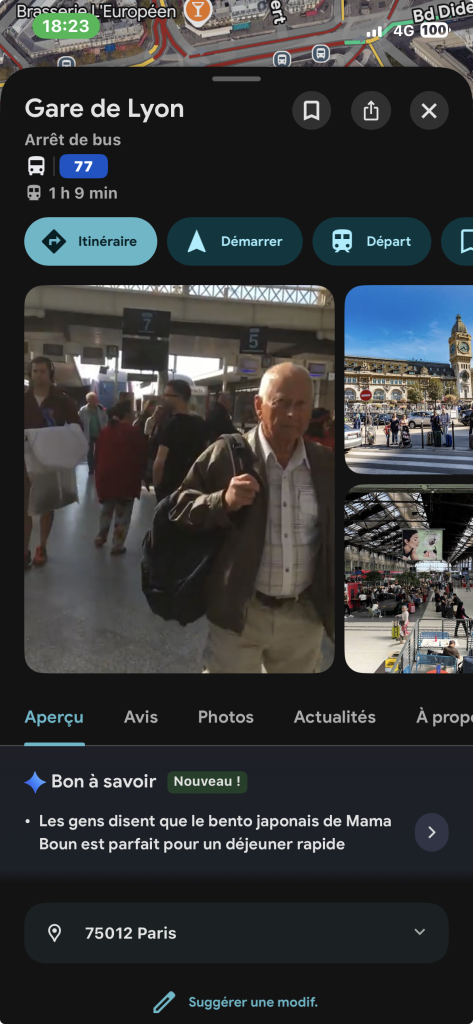

- Make sure your business information is consistent on external platforms like Yelp, Google Business or LinkedIn.

We already see Google testing AI summaries in Google Business Profile. And in some cases, these summaries are even based on external sources.

For the moment, it is still in beta, so the relevance remains variable. But the direction is quite clear: it will continue to improve and generalize.

- Integrate real trust signals (customer logos, case studies, certifications) directly on your site to validate your status as a reliable entity.

- Encourage detailed customer reviews, because this type of content offers the contextual freshness AI often looks for.

- Monitor and respond to negative reviews, which can be synthesized and influence an LLM’s overall perception.

GEO Visibility Tracking Checklist

Analyze AI Share of Voice

- Stop tracking only classic SEO positions. Measure how often your brand appears in AI responses, as a percentage, relative to your competitors.
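
The measurement can be sketched as a simple mention rate: the percentage of collected AI responses in which each brand appears. The brand names and responses below are invented, and plain substring matching is a simplifying assumption (it would miss misspellings and match brand names embedded in other words).

```python
def share_of_voice(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Percentage of responses that mention each brand (case-insensitive)."""
    total = len(responses)
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {
        brand: 100 * sum(brand.lower() in text.lower() for text in responses) / total
        for brand in brands
    }


# Hypothetical AI answers collected for the same set of prompts.
responses = [
    "For invoicing, Acme and Globex are both solid choices.",
    "Globex is the most commonly recommended option.",
    "Many teams start with Globex before switching.",
    "Acme has the simplest onboarding.",
]

print(share_of_voice(responses, ["Acme", "Globex"]))
```

Running the same prompts at regular intervals and comparing these percentages over time shows whether your share is growing against competitors.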

Track your visibility score (LLM Visibility)

- Use AI visibility tracking tools to monitor whether AI cites your brand as an authoritative source.

Net sentiment tracking

- Analyze whether AI speaks of your entity in a positive, neutral or negative way in its generated answers.
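
Once each AI answer has been labeled, a net sentiment figure can be computed. The formula below, positive minus negative divided by all labeled answers, is one common convention and an assumption here; how the labels are produced (manual review or a classifier) is left open.

```python
from collections import Counter


def net_sentiment(labels: list[str]) -> float:
    """Return (positive - negative) / total, in the range -1.0 to 1.0."""
    counts = Counter(labels)
    total = counts["positive"] + counts["neutral"] + counts["negative"]
    if total == 0:
        return 0.0
    return (counts["positive"] - counts["negative"]) / total


# Hypothetical labels for four AI answers that mention the brand.
print(net_sentiment(["positive", "positive", "neutral", "negative"]))
```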