March 9, 2026

WebMCP: what is it?

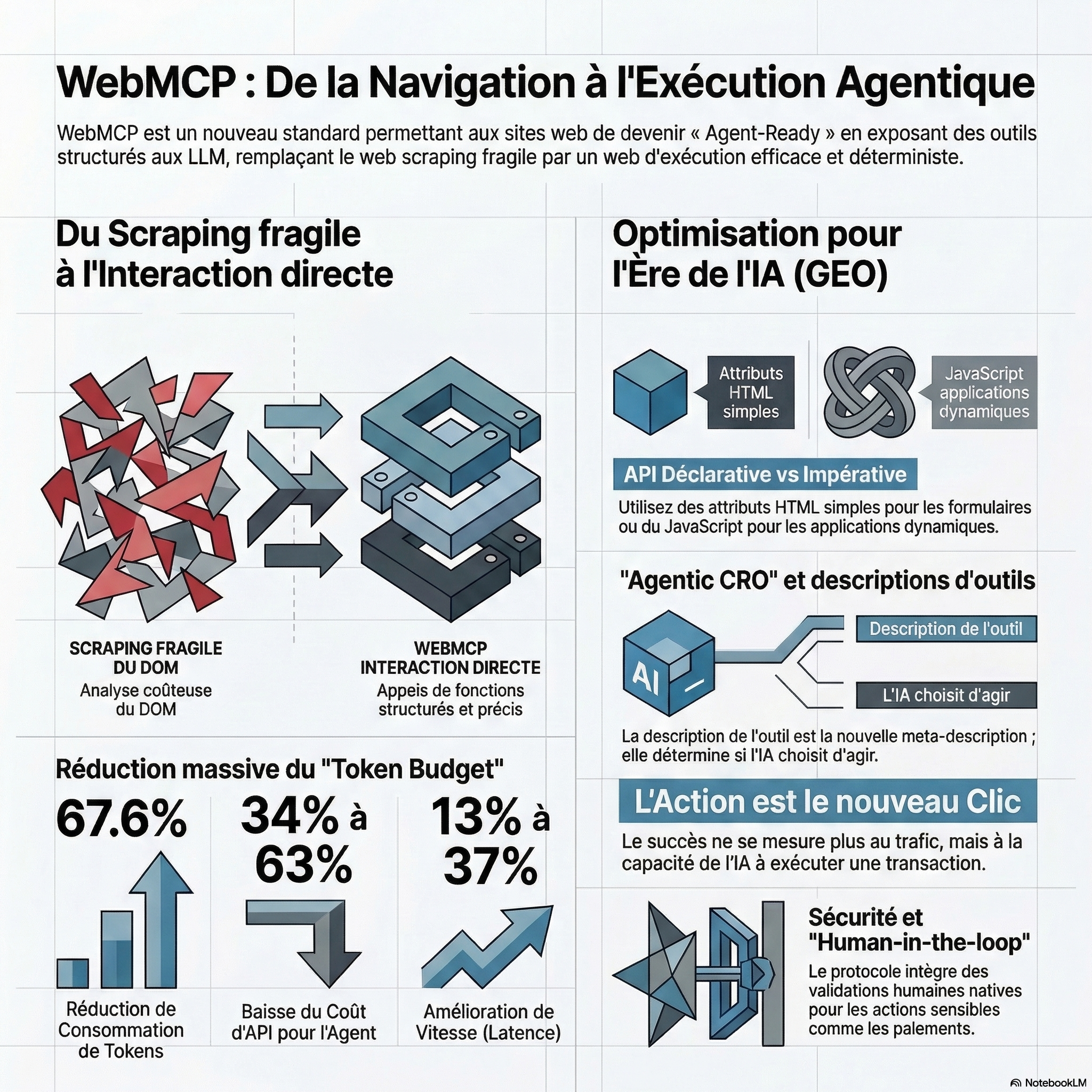

WebMCP is a proposed standard protocol (put forward to the W3C by engineers from Google and Microsoft) that allows a website to expose structured tools directly in the browser. Instead of scraping the page, the AI calls a specific function (e.g. book_flight) with typed parameters.

Why is WebMCP important?

WebMCP emerges as a way to help LLMs with agentic capabilities fully understand the content of your website, and to give them the ability to take specific actions, such as filling in forms.

If you ask me, WebMCP is the successor to structured data.

Some SEOs say that the web is undergoing its greatest transformation since the introduction of JavaScript and structured data.

The limits of web scraping and the DOM

Today, for an AI agent (such as ChatGPT, Claude, or Gemini) to interact with a website, it has to act like a human. It relies on two imperfect approaches:

- Visual analysis (screenshots): the agent takes a screenshot and uses a vision model to guess where to click. This method is slow, expensive in tokens, and very fragile.

- DOM analysis: the agent reads the raw HTML of the page. In modern apps (React, Angular), the DOM is full of nested tags and unreadable CSS class names that do not describe the possible actions, which forces the AI to reverse-engineer the interface.

As a result, a simple task can consume between 10,000 and 100,000 tokens and still break at the slightest change to the interface.

How does WebMCP optimize your site for LLMs?

The WebMCP protocol makes sites 'agent-ready'.

Specifically, WebMCP removes the need for LLMs to parse a site's DOM and screenshots to understand its content. The website tells the agent explicitly: 'Here is what I can do, here are the parameters I need, and here is how to use me.'

By providing a structured interaction graph, WebMCP reduces token usage by about 65% on average (up to 78.6% for e-commerce) and cuts LLM API costs by 34% to 63%.
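As a back-of-the-envelope illustration, the sketch below applies the ~65% average reduction quoted above to both ends of the 10,000 to 100,000 token range mentioned earlier. The helper name is hypothetical, and real savings will depend on the site, the task and the model.

```javascript
// Back-of-the-envelope estimate of the tokens left after a percentage reduction.
// estimateTokensAfter is a hypothetical helper, not part of any WebMCP API.
function estimateTokensAfter(baselineTokens, reductionPercent) {
  // Tokens are counted as whole units, so round the result.
  return Math.round(baselineTokens * (1 - reductionPercent / 100));
}

// Apply the ~65% average reduction to both ends of the 10,000 to 100,000 token range.
for (const baseline of [10000, 100000]) {
  console.log(`${baseline} tokens -> about ${estimateTokensAfter(baseline, 65)} tokens`);
}
```

Under these assumptions, a flow that used to cost 10,000 tokens would drop to roughly 3,500, and a 100,000-token flow to roughly 35,000.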

How does WebMCP work?

WebMCP can be used in two ways!

WebMCP with the declarative API (simpler solution)

Ideal for static forms and basic e-commerce. It uses special HTML attributes.

For sites that already have HTML forms, the declarative API turns these forms into tools the AI can understand, with minimal effort. You only need to add a few specific attributes to the form element:

- toolname: the name of the tool (e.g. 'searchFlights').

- tooldescription: a natural-language description of what the tool does.

- toolautosubmit (optional): indicates whether the agent may submit the form automatically or whether it requires human validation.

Step 1: Enable the browser flag

To test locally, use Chrome Canary (version 146+) and enable the flag chrome://flags/#enable-webmcp-testing.

Step 2: Annotate the tags

The browser automatically translates the form fields into a JSON schema that the AI agent can read and fill out.
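To picture what this translation produces, here is a hypothetical sketch that turns a list of field descriptors, modeled on the book_flight form in this step, into a JSON Schema-style tool description. It only mimics the idea: the exact schema Chrome generates is not documented here, `formFieldsToSchema` is an illustrative name, and the `required` flags are assumptions (the form itself does not declare them).

```javascript
// Hypothetical sketch: derive a JSON Schema-like tool description from form fields.
// This approximates what a browser *might* generate; it is not Chrome's algorithm.
function formFieldsToSchema(toolName, toolDescription, fields) {
  const properties = {};
  const required = [];
  for (const field of fields) {
    // Map the HTML input type to a JSON Schema type (only a few cases handled).
    const prop = { type: field.type === "number" ? "number" : "string" };
    if (field.type === "date") prop.format = "date";
    if (field.description) prop.description = field.description;
    properties[field.name] = prop;
    if (field.required) required.push(field.name);
  }
  return {
    name: toolName,
    description: toolDescription,
    inputSchema: { type: "object", properties, required },
  };
}

// Descriptors mirroring the book_flight form; "required: true" is an assumption.
const tool = formFieldsToSchema("book_flight", "Book a commercial flight", [
  { name: "origin", description: "Departure airport as an IATA code (e.g. CDG)", required: true },
  { name: "destination", description: "Arrival airport as an IATA code", required: true },
  { name: "date", type: "date", required: true },
]);
console.log(JSON.stringify(tool, null, 2));
```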

```html
<form toolname="book_flight" tooldescription="Book a commercial flight">
  <!-- toolparamdescription documents each field for the agent -->
  <input name="origin" toolparamdescription="Departure airport as an IATA code (e.g. CDG)">
  <input name="destination" toolparamdescription="Arrival airport as an IATA code">
  <input name="date" type="date">
  <button type="submit">Search</button>
</form>
```

WebMCP with the imperative API (more complete)

For dynamic web applications (SPAs) whose state changes constantly, the imperative API uses JavaScript through navigator.modelContext.registerTool().

You define a name, a description, a JSON input schema and an execute function (the action handler). The major advantage is that tools can be registered or removed dynamically according to the state of the page (for example, a payment tool is only exposed when the cart is not empty).
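The cart example can be sketched as follows. It assumes the draft API exposes both `registerTool()` and `unregisterTool(name)` on `navigator.modelContext` (check the current draft before relying on either); the `checkout` tool, its schema and the `createCheckoutToolSync` helper are hypothetical. The registry is passed in as a parameter so the logic can also be exercised outside a browser.

```javascript
// Hypothetical sketch: expose a "checkout" tool only while the cart is non-empty.
// `modelContext` is expected to offer registerTool(tool) and unregisterTool(name),
// as described in the WebMCP draft; verify both against the current specification.
function createCheckoutToolSync(modelContext, getCartItems) {
  let registered = false;

  // Call sync() after every cart change.
  return function sync() {
    const hasItems = getCartItems().length > 0;
    if (hasItems && !registered) {
      modelContext.registerTool({
        name: "checkout",
        description: "Start checkout for the items currently in the user's cart",
        inputSchema: { type: "object", properties: {} },
        async execute() {
          // Placeholder action: a real site would start its own checkout flow here.
          return { status: "checkout_started", itemCount: getCartItems().length };
        },
      });
      registered = true;
    } else if (!hasItems && registered) {
      modelContext.unregisterTool("checkout");
      registered = false;
    }
  };
}

// In the browser (assuming the draft API is present):
// const sync = createCheckoutToolSync(navigator.modelContext, () => cart.items);
```

Reading the cart through a getter, rather than capturing it once, keeps the tool's result in line with the current cart state.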

Step 1: Define the JSON schema. The AI agent needs a strict contract.

Step 2: Register the tool via navigator.modelContext. The tool is registered on the browser's global object, with its name, its description and its execute function.

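```javascript
// Feature-detect: the API only exists in browsers that ship WebMCP (e.g. behind a flag).
if ('modelContext' in navigator) {
  navigator.modelContext.registerTool({
    name: "add_item_to_cart",
    description: "Adds a specific product to the user's cart by its ID",
    inputSchema: {
      type: "object",
      properties: {
        productId: { type: "string", description: "The product's unique identifier" },
        quantity: { type: "number", minimum: 1 }
      },
      required: ["productId", "quantity"]
    },
    async execute({ productId, quantity }) {
      const response = await fetch('/api/cart/add', {
        method: 'POST',
        // Declare the JSON body; same-origin requests send session cookies by default.
        headers: { 'Content-Type': 'application/json' },
        body: JSON.stringify({ productId, quantity })
      });
      if (!response.ok) {
        // Surface a descriptive error instead of failing silently.
        throw new Error(`Cart API returned HTTP ${response.status}`);
      }
      return response.json(); // Structured result for the agent
    }
  });
}
```

How to test WebMCP today?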

The standard is still a draft, but you can start experimenting:

1. Download Chrome Canary.

2. Enable the flag chrome://flags/#enable-webmcp-testing.

3. Use open-source libraries such as MCP-B (@mcp-b/global), which acts as a polyfill bringing the W3C API to any browser and bridging it with existing AI clients.

4. Install a Model Context Tool extension. You're spoilt for choice:

- WebMCP – Model Context Tool Inspector (official extension)

- Browser MCP

- WebMCP Ready Checker

For the agent extension to work, you need a Gemini API key, which can be obtained free of charge for testing purposes.

To get one, simply create a new project in Google AI Studio, then click 'Create API key'.

You then enter this API key in the extension.

It is also possible to test it on this website, which is provided for this purpose. If everything works correctly, you should see that the tool is detected and that it can be invoked directly from the extension.

Impact of WebMCP on GEO and SEO

Research covering various stacks, including WordPress and e-commerce, indicates that adopting the WebMCP standard makes interactions with LLMs more efficient: lower token consumption, lower API costs and faster response times.

| Key performance indicator (KPI) | WebMCP impact |
|---|---|
| Token consumption | 67.6% reduction on average |
| Agent API costs | 34% to 63% decrease |
| Answer quality | Maintained at 97.9% (vs. 98.8% with manual interaction) |
| Speed (latency) | 13% to 37% improvement in complex flows |

The GEO of the future is no longer limited to being cited by an LLM; it will depend on a website's ability to be used by a generative agent.

If a user asks their AI to 'book the cheapest flight to Paris', sites optimized for SEO/GEO with reliable WebMCP tools will capture this traffic, while the others simply will not exist in the agent's decision space.

- The description is the new meta description.

Use action verbs and precise, positively phrased descriptions.

The quality of your tool's description is what will determine whether a language model (LLM) decides to use it.

- JSON schema design is the new structured data.

Design clear schemas, accept raw user input (without forcing the AI to do the calculations) and return descriptive errors to achieve a high agentic conversion rate.
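A minimal sketch of both ideas, using a hypothetical search_hotels tool: the agent passes raw values (a check-in date and a number of nights) and the tool does the date arithmetic itself, instead of asking the model to compute a check-out date. Invalid input produces a structured, descriptive error rather than an exception. The tool name, fields and error shape are assumptions, not part of the standard.

```javascript
// Hypothetical tool: raw inputs in, calculations done by the site, descriptive errors out.
const searchHotelsTool = {
  name: "search_hotels",
  description: "Search hotel rooms in a city for a check-in date and a number of nights",
  inputSchema: {
    type: "object",
    properties: {
      city: { type: "string", description: "City name, e.g. Paris" },
      checkIn: { type: "string", format: "date", description: "Check-in date, YYYY-MM-DD" },
      nights: { type: "integer", minimum: 1, description: "Number of nights" },
    },
    required: ["city", "checkIn", "nights"],
  },
  async execute({ city, checkIn, nights }) {
    const isIsoDate = /^\d{4}-\d{2}-\d{2}$/.test(checkIn);
    const start = new Date(`${checkIn}T00:00:00Z`);
    // Reject malformed strings and impossible dates such as 2026-02-30.
    if (!isIsoDate || Number.isNaN(start.getTime()) || start.toISOString().slice(0, 10) !== checkIn) {
      return {
        error: "INVALID_DATE",
        message: `checkIn "${checkIn}" is not a valid date; use YYYY-MM-DD, e.g. 2026-03-20.`,
      };
    }
    if (!Number.isInteger(nights) || nights < 1) {
      return { error: "INVALID_NIGHTS", message: "nights must be a whole number of at least 1." };
    }
    // The tool, not the model, computes the check-out date.
    const end = new Date(start);
    end.setUTCDate(end.getUTCDate() + nights);
    const checkOut = end.toISOString().slice(0, 10);
    // A real site would query its inventory here; this sketch only echoes the computed stay.
    return { city, checkIn, checkOut, nights };
  },
};
```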

- Tool discoverability is the new indexing.

Agents need to know which tools are available before they even visit the page.

- 'Agentic CRO' (Conversion Rate Optimization).

Optimizing tool names and descriptions becomes crucial. If an AI does not understand what a tool is for, or if the schema is too complex, it will choose a competitor's tool.

- Massive reduction in LLM costs

Tests show an average reduction of 65% to 78% in the tokens consumed by agents, which makes your site more economically attractive to AI platforms.

- Reliability and determinism.

By providing a clear interaction contract, you drastically reduce AI hallucinations during navigation.

- Verb-First Naming

To maximize GEO ranking (how well LLMs understand the tool), tools should follow a strict action_object naming convention (e.g. cancel_subscription is better than subscription_manager).
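The action_object convention is easy to check automatically. A hypothetical lint, assuming snake_case names and a small, site-specific list of accepted verbs (the verb list below is illustrative only):

```javascript
// Hypothetical lint for the verb-first, snake_case "action_object" naming convention.
// The verb list is an illustrative assumption; extend it for your own site.
const ACTION_VERBS = new Set([
  "add", "book", "cancel", "create", "delete", "get", "list",
  "remove", "request", "search", "update",
]);

function checkToolName(name) {
  // snake_case: lowercase words joined by single underscores, at least two words.
  if (!/^[a-z]+(_[a-z]+)+$/.test(name)) {
    return { ok: false, reason: "use snake_case with at least a verb and an object" };
  }
  const verb = name.split("_")[0];
  if (!ACTION_VERBS.has(verb)) {
    return { ok: false, reason: `"${verb}" is not a recognized action verb` };
  }
  return { ok: true };
}

console.log(checkToolName("cancel_subscription").ok); // true
console.log(checkToolName("subscription_manager").ok); // false
```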

- UI-Update-Before-Resolve

A good WebMCP practice is to update the visible DOM before the tool's JavaScript promise resolves. This lets the agent 'see' the change through its vision capabilities if needed, which doubles as a check.
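A hypothetical sketch of the pattern: the tool's execute function updates the page first and only then returns, so the visible state already matches the result the agent receives. The `renderCart` callback stands in for whatever DOM update your framework performs; it is injected so the sketch also runs outside a browser.

```javascript
// Hypothetical sketch of "UI-Update-Before-Resolve": update the UI, then resolve.
function makeAddToCartTool(cart, renderCart) {
  return {
    name: "add_item_to_cart",
    description: "Add a product to the user's cart by its ID",
    inputSchema: {
      type: "object",
      properties: {
        productId: { type: "string" },
        quantity: { type: "number", minimum: 1 },
      },
      required: ["productId", "quantity"],
    },
    async execute({ productId, quantity }) {
      cart.push({ productId, quantity });
      // 1. Update the visible UI first (in a browser: re-render the cart badge, list, etc.).
      renderCart(cart);
      // 2. Only then resolve the promise with a structured result for the agent.
      return { ok: true, itemCount: cart.length };
    },
  };
}
```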

- Offline agents (PWA).

A PWA can declare WebMCP tools in its manifest. This allows the host system (OS) to launch the application and navigate to the relevant page even when it is not open, turning a website into a permanent system 'plugin'.

- Discovery.

For now, the agent has to load the page to discover its tools. SEO experts (Barry Schwartz, Glenn Gabe) already anticipate a webmcp.json file at the site root, or an extension of robots.txt / sitemap.xml, to declare a site's capabilities before the crawl.

- Trust Time Limits.

The browser may impose time limits on the permissions granted to an agent, forcing the user to re-authorize the AI's actions regularly.

WebMCP: use cases

What is interesting about WebMCP is that it moves us from websites the AI merely answers questions about… to websites where it can act directly.

In B2C scenarios, an agent could, for example, compare prices across several e-retailers in a few seconds via a tool such as search_products. It could also go a step further and perform an action, such as booking a restaurant or a flight directly on the site, without going through an aggregator.

On the B2B side, the use cases become even more interesting. Imagine an agent that helps a buyer manage sourcing or logistics by automatically sending requests for quotes (RFQs) to multiple service providers through a standardized tool such as request_quote.

What really changes here is that the tools are composable.

An agent can chain several actions across different services within the same browser session.

The result: we gradually move from web browsing to a web runtime, where an agent can handle a task end-to-end with much less friction for the user.
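From the agent's side, composability roughly means feeding the output of one tool into the next. The sketch below chains the two tool names used in the examples above, search_products (site A, a retailer) and request_quote (site B, here assumed to be a logistics provider quoting delivery), with stub implementations. Everything except those two names is hypothetical, and in practice the browser's agent, not a local function call, would invoke tools on each site.

```javascript
// Hypothetical stubs standing in for tools exposed by two different sites.
const siteA = {
  search_products: {
    async execute({ query }) {
      // Stub data; a real tool would query the retailer's catalogue.
      return [
        { sku: "A-100", title: `${query} (standard)`, price: 120 },
        { sku: "A-200", title: `${query} (pro)`, price: 95 },
      ];
    },
  },
};
const siteB = {
  request_quote: {
    async execute({ sku, quantity }) {
      // Stub: a real provider tool would send an RFQ and return its reference.
      return { rfqId: `RFQ-${sku}-${quantity}`, status: "sent" };
    },
  },
};

// Agent-side composition: the output of one tool becomes the input of the next.
async function sourceCheapest(query, quantity) {
  const results = await siteA.search_products.execute({ query });
  const cheapest = results.reduce((best, p) => (p.price < best.price ? p : best));
  return siteB.request_quote.execute({ sku: cheapest.sku, quantity });
}

sourceCheapest("pallet racking", 50).then((quote) => console.log(quote));
```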

The paradigm shift: from Crawl Budget to Token Budget

In classic technical SEO, we talk about optimizing the crawl budget.

In a world where WebMCP becomes mainstream, we will also talk about a token budget, or what some are already starting to call the tokenomics of the web.

Why? Because an AI agent does not interact with a site like a classic crawler. It does not go through the DOM.

WebMCP therefore introduces a new kind of optimization: reducing the cognitive and token cost of interacting with the web.

Human in the loop

The WebMCP standard introduces a central object: the ModelContextClient.

It structures the interaction between AI agents, the browser and web services.

- requestUserInteraction(): this function introduces a native human-in-the-loop mechanism.

The AI can temporarily suspend its execution to request human validation in the browser. For example: 'Do you really want to confirm this payment of €450?'

- Composability: tools are designed to be atomic and interoperable.

An agent can combine multiple actions from different services in the same browser session, for example using a search_flights tool on site A, then book_hotel on site B.
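A hedged sketch of the requestUserInteraction() step for the €450 payment example. It assumes, based on the WebMCP draft and to be verified against the current spec, that execute receives the ModelContextClient as its second argument and that `requestUserInteraction(callback)` runs the callback and resolves with its result. The pay_order tool and the injected `askUser` function are hypothetical; in a browser, `askUser` could be `window.confirm` or a custom modal.

```javascript
// Hypothetical "pay_order" tool with a human-in-the-loop confirmation step.
// Assumption (WebMCP draft, to be checked): execute(input, client) receives a
// ModelContextClient whose requestUserInteraction(callback) resolves with the
// callback's result.
function makePayOrderTool(askUser) {
  return {
    name: "pay_order",
    description: "Pay for the current order after explicit confirmation by the user",
    inputSchema: {
      type: "object",
      properties: { amountEur: { type: "number", minimum: 0 } },
      required: ["amountEur"],
    },
    async execute({ amountEur }, client) {
      // Pause the agent and hand control to the human before any money moves.
      const confirmed = await client.requestUserInteraction(async () =>
        askUser(`Do you really want to confirm this payment of €${amountEur}?`)
      );
      if (!confirmed) {
        return { status: "cancelled", message: "The user declined the payment." };
      }
      // A real site would call its payment backend here.
      return { status: "paid", amountEur };
    },
  };
}

// Browser usage, assuming the draft API is present:
// navigator.modelContext?.registerTool(makePayOrderTool((q) => window.confirm(q)));
```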

Security and trust boundaries

Unlike scraping, which is 'blind', WebMCP is governed by strict rules:

- Same-Origin Policy (SOP): an agent cannot use one site's tools to attack another tab.

- Legacy auth: this is the strong point highlighted on Reddit's r/webdev. Since the code runs client-side, the agent uses the existing session cookies. There is no need to manage complex API keys or OAuth flows that are time-consuming for the end user.

- JWE (JSON Web Encryption): the ability to encrypt tool responses so that only the agent can read sensitive data (PII).

Conclusion: my opinion on WebMCP

The WebMCP protocol is not a simple evolution of web markup, as meta tags or Schema.org were. Nor is it hype, like llms.txt, for example.

Why? Because Microsoft and Google are backing it. The last time these two players agreed on a standard, we got structured data, which has become a pillar of technical and on-page SEO.

Rather, it marks a deeper transformation of the web's infrastructure.

For decades, the web has been designed as a network of information readable by humans.

What is emerging today is different: a web where agents can directly execute actions.

As Dan Petrovic (Dejan AI) has emphasized, we may be witnessing the emergence of a second layer of the web, designed not for human users but for machines.

In this context, the role of SEO, and more broadly of GEO, is also changing.

The goal is no longer only to rank a URL in a SERP, but potentially to place an executable function in the LLM's decision-making chain.

Semantic optimization remains important, of course, but it is starting to make way for something new: agentic interface engineering.

That said, adopting such a standard calls for caution.

WebMCP is still in early preview in Chrome (146+), and exposing tools directly through the browser requires a high level of rigor, particularly on security.

Tool inputs must be validated strictly against their schemas, both client-side (for example with Zod) and server-side, to limit attacks such as prompt injection.
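Zod is one option; the idea can also be illustrated without any dependency. The hypothetical `validateInput` below checks an agent's arguments against the small subset of JSON Schema used in this article (type, required, minimum) and rejects undeclared fields before the tool runs. It is a sketch, not a complete JSON Schema validator, and the same checks still have to be repeated on the server.

```javascript
// Minimal, dependency-free check of tool arguments against a tiny JSON Schema subset
// (object input, required, string/number types, minimum). Not a full validator.
function validateInput(schema, input) {
  if (typeof input !== "object" || input === null || Array.isArray(input)) {
    return ["input must be an object"];
  }
  const errors = [];
  const properties = schema.properties ?? {};
  for (const key of schema.required ?? []) {
    if (!(key in input)) errors.push(`missing required field "${key}"`);
  }
  for (const [key, rule] of Object.entries(properties)) {
    if (!(key in input)) continue;
    const value = input[key];
    if (rule.type === "number" && (typeof value !== "number" || !Number.isFinite(value))) {
      errors.push(`"${key}" must be a number`);
    } else if (rule.type === "string" && typeof value !== "string") {
      errors.push(`"${key}" must be a string`);
    } else if (rule.minimum !== undefined && value < rule.minimum) {
      errors.push(`"${key}" must be >= ${rule.minimum}`);
    }
  }
  // Reject fields the schema does not declare (like additionalProperties: false).
  for (const key of Object.keys(input)) {
    if (!(key in properties)) errors.push(`unexpected field "${key}"`);
  }
  return errors;
}

// Schema from the add_item_to_cart example.
const cartSchema = {
  type: "object",
  properties: { productId: { type: "string" }, quantity: { type: "number", minimum: 1 } },
  required: ["productId", "quantity"],
};
console.log(validateInput(cartSchema, { productId: "p1", quantity: 0 }));
```

Only when the returned list is empty should the tool go on to call its backend.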

Likewise, sensitive interactions such as payments, critical data or YMYL applications must go through human-in-the-loop mechanisms, in particular the requestUserInteraction() API.

If these safeguards are in place, one thing seems pretty clear:

the platforms that build robust, deterministic, agent-ready architectures now will have a head start.

Because tomorrow's traffic may no longer be just a click.

It could become direct action.

A zero-click web… but a transactional one.

And in this new paradigm, sites designed only for human eyes may gradually become invisible to the ecosystem of autonomous assistants.
