All the tools I present here are available directly on my Streamlit page: https://share.streamlit.io/user/aslan04739

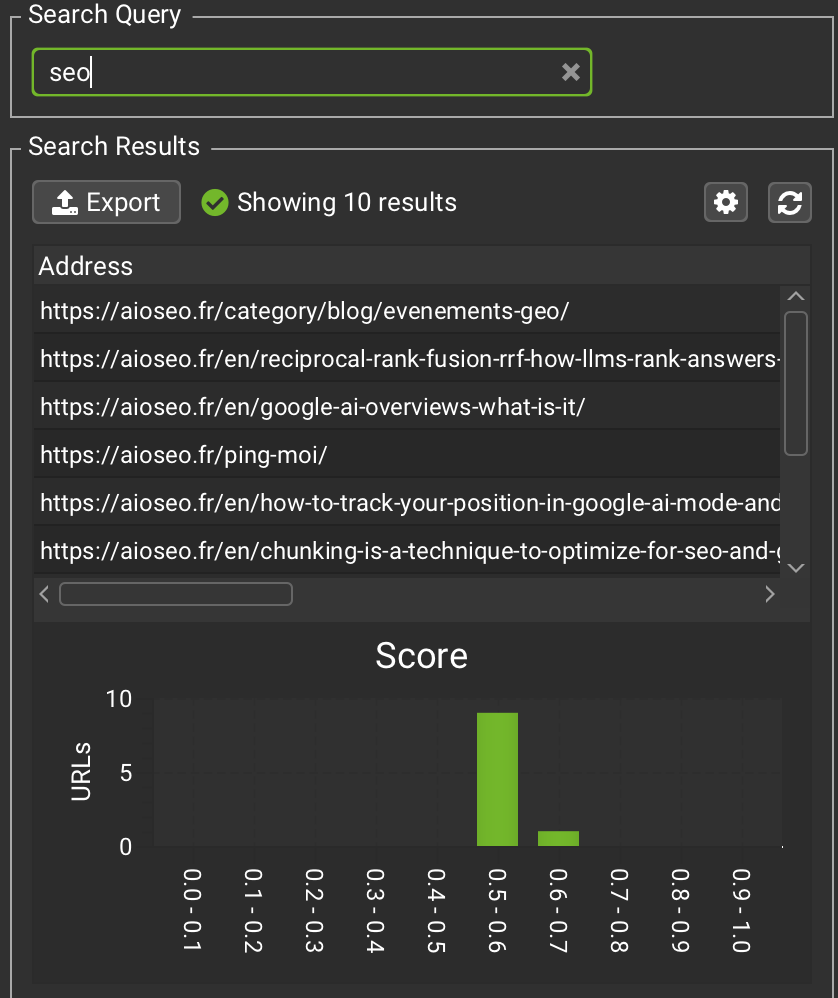

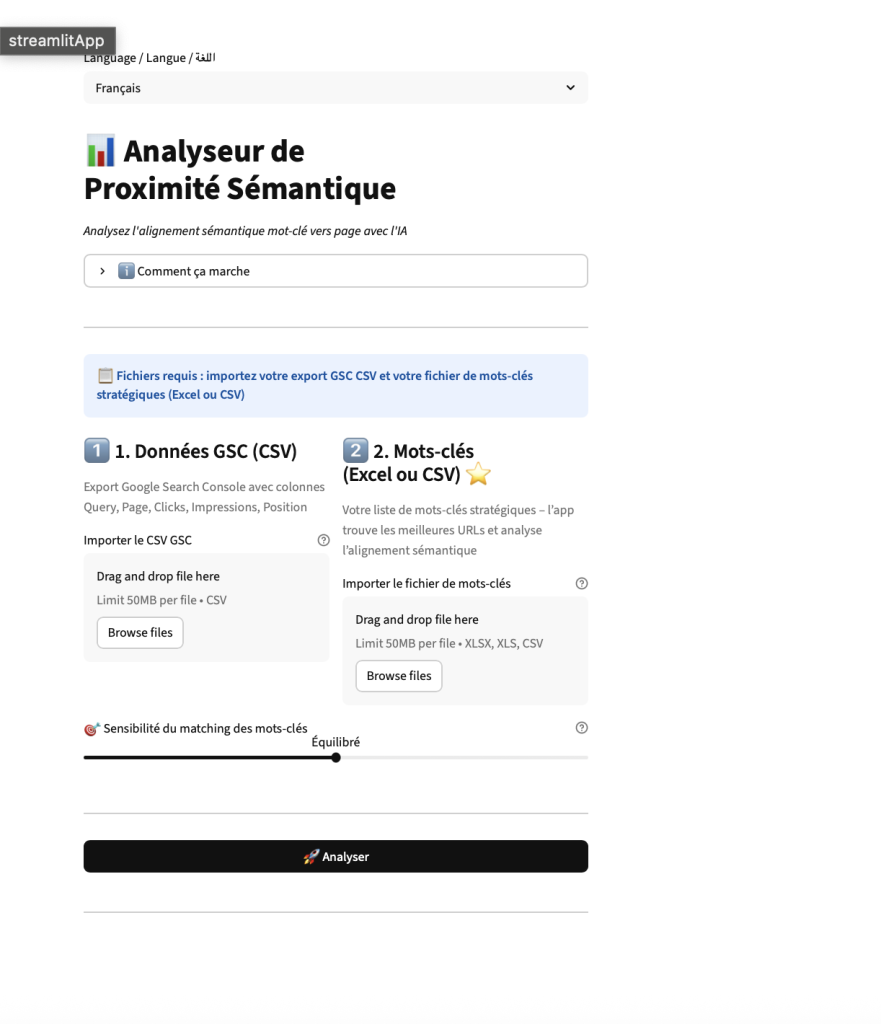

Bulk semantic proximity analysis

If you work in GEO or semantic SEO, you normally analyze the semantic proximity between your queries and your URLs.

I mean… I hope.

In Screaming Frog, you can run this check. The problem: it works one URL at a time, and there is no easy way to compare your URLs with those of a competitor.

So I coded a first tool to solve this problem: a tool that computes your semantic proximity score vs. that of a competitor of your choice.

And then I had another need: to do it in bulk.

So I coded a second tool.

The method is different, but the goal remains the same: to find out whether Google associates the right pages with the right queries.

How does the bulk semantic proximity analysis work?

The process is simple.

- You have a Google Sheet / Excel / CSV file with your priority keywords (if you don’t have one, start there).

- You export your data from Google Search Console with the query + page dimensions. Personally, I use the Search Analytics for Sheets extension. Indispensable.

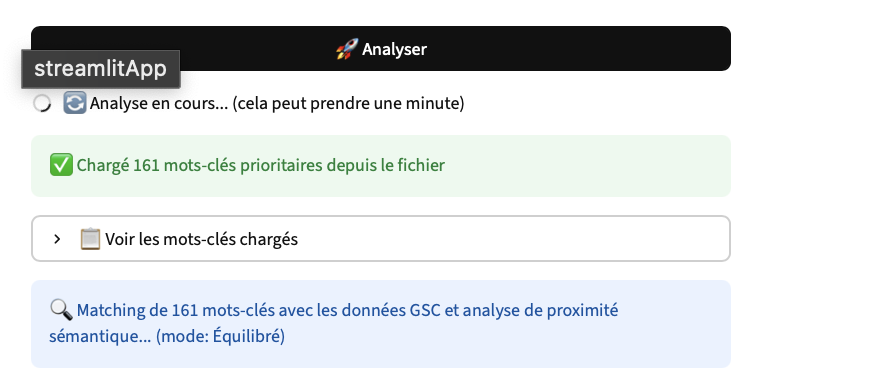

- You import the two files into the tool.

And you get:

- the URLs that rank for your queries

- the semantic proximity score for each URL
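To make the matching step concrete, here is a minimal sketch. It is not the tool's actual code: the column names (`keyword`, `query`, `page`), the function names and the sample data are assumptions, and `toy_similarity` is a simple bag-of-words cosine used as a stand-in for a real embedding model.

```python
import csv
import io
import math
import re
from collections import Counter


def tokens(text):
    """Lowercase a string and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def toy_similarity(a, b):
    """Bag-of-words cosine similarity; a stand-in for embedding similarity."""
    va, vb = Counter(tokens(a)), Counter(tokens(b))
    dot = sum(va[t] * vb[t] for t in va)
    norm = math.sqrt(sum(v * v for v in va.values())) * math.sqrt(
        sum(v * v for v in vb.values())
    )
    return dot / norm if norm else 0.0


def bulk_scores(keywords_csv, gsc_csv, page_text, similarity=toy_similarity):
    """Join priority keywords with GSC query/page rows and score each pair.

    page_text is a callable url -> text (hypothetical, e.g. a scraper).
    """
    priority = {row["keyword"].strip().lower()
                for row in csv.DictReader(io.StringIO(keywords_csv))}
    results = []
    for row in csv.DictReader(io.StringIO(gsc_csv)):
        query = row["query"].strip().lower()
        if query in priority:
            score = similarity(query, page_text(row["page"]))
            results.append((query, row["page"], round(score, 3)))
    return results


# Tiny in-memory example (made-up data).
KEYWORDS = "keyword\nsemantic seo\n"
GSC = ("query,page\n"
       "semantic seo,https://example.com/semantic-seo\n"
       "semantic seo,https://example.com/\n"
       "other query,https://example.com/other\n")
PAGES = {
    "https://example.com/semantic-seo": "A guide to semantic SEO and entities",
    "https://example.com/": "Welcome to our agency",
    "https://example.com/other": "Something else",
}
rows = bulk_scores(KEYWORDS, GSC, PAGES.get)
```

In this toy run, the dedicated page scores higher than the homepage for the same query, which is exactly the kind of signal the bulk analysis is meant to surface.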

The possible scenarios

After the analysis, there are two scenarios:

The tool manages to fetch your URLs.

In this case, it analyzes:

- the slug

- the tags

- and above all the content of the page

This is where the semantic proximity score becomes really relevant.

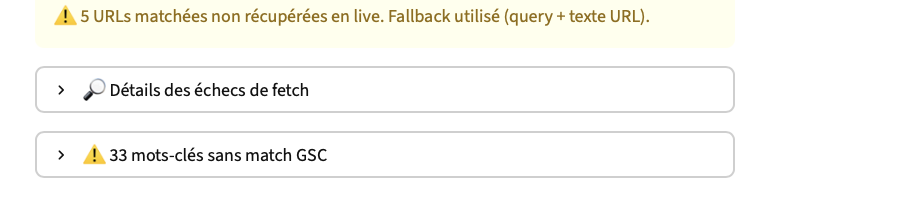

Otherwise, the tool sometimes cannot retrieve the page (blocking, timeout, JS rendering, etc.).

In this case, the score is based only on a comparison between the URL and the query.

This is clearly not ideal, but it can still be a first signal.
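The fallback logic can be sketched like this. It is an illustration under assumptions, not the tool's implementation: `fetch` is a hypothetical callable that returns the page text or raises, and `slug_to_text` is one simple way to turn a URL into comparable words.

```python
import re
from urllib.parse import urlparse


def slug_to_text(url):
    """Turn a URL path into plain words, e.g. '/blog/semantic-seo/' -> 'blog semantic seo'."""
    path = urlparse(url).path
    return " ".join(re.findall(r"[A-Za-z0-9]+", path)).lower()


def text_for_scoring(url, fetch):
    """Return (text, mode): page content when fetch succeeds, else the slug.

    fetch is any callable url -> str that raises on failure (hypothetical).
    """
    try:
        content = fetch(url)
        if content and content.strip():
            return content, "content"
    except Exception:
        pass  # blocked, timeout, JS-only page, etc.
    return slug_to_text(url), "url_only"


def failing_fetch(url):
    """Simulate a page that cannot be retrieved."""
    raise TimeoutError("simulated timeout")


text, mode = text_for_scoring(
    "https://example.com/blog/semantic-seo-guide/", failing_fetch
)
```

Keeping the `mode` next to the score makes it easy to flag which rows were scored on the URL alone and deserve less trust.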

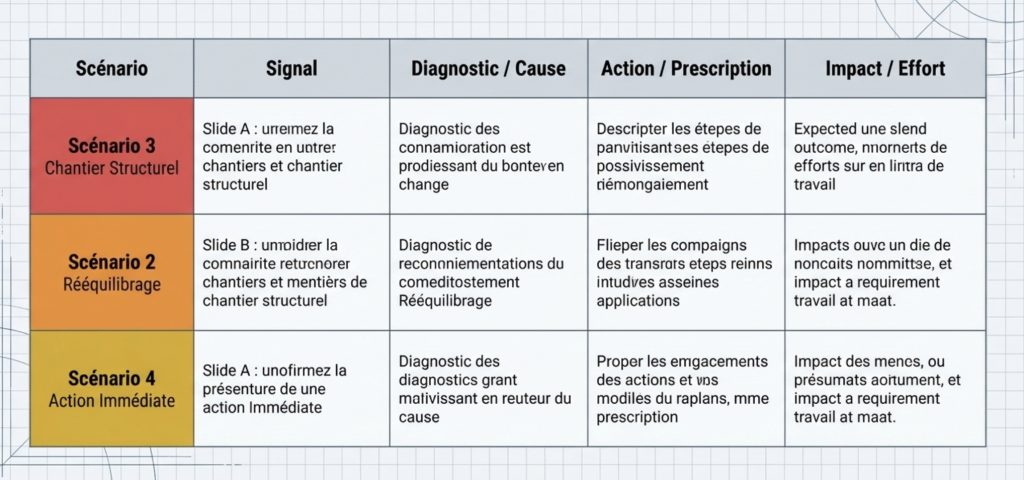

When the tool manages to fetch the URLs, several scenarios can occur.

1. The right URLs rank and the scores are good

Nothing to report.

Google understands your pages and matches them correctly to the query.

2. The right URLs rank but the scores are low

There is probably some on-page optimization work to do: content, tags, semantic structure.

3. The wrong URLs rank

Typically the homepage or a generic page.

Often a semantic relevance problem on the target page.

4. None of your relevant URLs rank

In this case, the problem goes beyond semantics: authority, site structure, poorly covered search intent, etc.

In short, the score is not just there to look pretty.

It also helps you understand why Google associates (or not) a page with a query.
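As an illustration, the four scenarios can be expressed as a small decision function. The function name, the inputs and especially the `good_score` threshold are assumptions to tune on your own data, not values used by the tool.

```python
def diagnose(target_url, ranking_urls, scores, good_score=0.6):
    """Map one query to one of the four scenarios.

    ranking_urls: URLs from your site that rank for the query.
    scores: dict url -> semantic proximity score.
    good_score: hypothetical threshold, to be tuned on your own data.
    """
    if not ranking_urls:
        return "4: no relevant URL ranks (look beyond semantics)"
    if target_url not in ranking_urls:
        return "3: wrong URL ranks (check relevance of the target page)"
    if scores.get(target_url, 0.0) >= good_score:
        return "1: right URL ranks, good score (nothing to report)"
    return "2: right URL ranks, low score (on-page optimization)"


target = "https://example.com/semantic-seo"
home = "https://example.com/"
case_1 = diagnose(target, [target], {target: 0.72})
case_2 = diagnose(target, [target], {target: 0.41})
case_3 = diagnose(target, [home], {home: 0.20})
case_4 = diagnose(target, [], {})
```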

SEO Entity Analyzer

Manual entity analysis does not scale. I developed this tool to automate the semantic audit via the GPT API.

How it works

The tool cross-references the content of your URLs with a JSON template via the API, to extract the entities and check their presence and relevance instantly.
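Here is a rough sketch of what "content + JSON template via the API" could look like. Everything specific is an assumption: the template shape, the prompt wording and the model name are hypothetical, and the model answer is simulated so that the example runs without an API key.

```python
import json

# Hypothetical JSON shape the model is asked to fill in.
ENTITY_TEMPLATE = {"entities": [{"name": "", "type": "", "salience": 0.0}]}


def build_request(page_text, model="gpt-4o-mini"):
    """Build a chat-style request payload asking for entities as JSON."""
    return {
        "model": model,
        "response_format": {"type": "json_object"},
        "messages": [
            {"role": "system",
             "content": "Extract the named entities from the page. "
                        "Answer only with JSON shaped like: "
                        + json.dumps(ENTITY_TEMPLATE)},
            {"role": "user", "content": page_text},
        ],
    }


def check_entities(response_json, expected):
    """Compare the entities returned by the model with the ones you expect."""
    found = {e["name"].lower() for e in json.loads(response_json)["entities"]}
    wanted = {name.lower() for name in expected}
    return {"present": sorted(wanted & found), "missing": sorted(wanted - found)}


# Simulated model answer (made up), so the sketch runs offline.
fake_answer = json.dumps({"entities": [
    {"name": "Google Search Console", "type": "Product", "salience": 0.8},
    {"name": "Streamlit", "type": "Product", "salience": 0.3},
]})
payload = build_request("Some page text about Streamlit dashboards.")
report = check_entities(fake_answer, ["Streamlit", "Knowledge Graph"])
```

The point of forcing a JSON answer is that presence and relevance checks become plain set operations instead of free-text parsing.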

Features

- Single URL: immediate entity audit.

- Bulk mode: import a list of URLs, with control over the crawl depth.

Why is it useful for SEO & GEO consultants?

- LLM alignment: generative engines (ChatGPT, Google SGE/AIO) reason in terms of entities (Knowledge Graph), not keywords. Using the API lets you audit your content with the same logic as the engines that judge it.

- Entity salience & disambiguation: check whether your key entities are correctly detected and disambiguated, to maximize your chances of being cited in AI-generated answers (GEO).

- Topical authority at scale: a manual audit cannot validate the semantic consistency of a whole cluster. Bulk mode lets you check that your entity coverage is solid across the whole structure, not just on one page.

Free tool, developed for my own consulting needs. Try it!

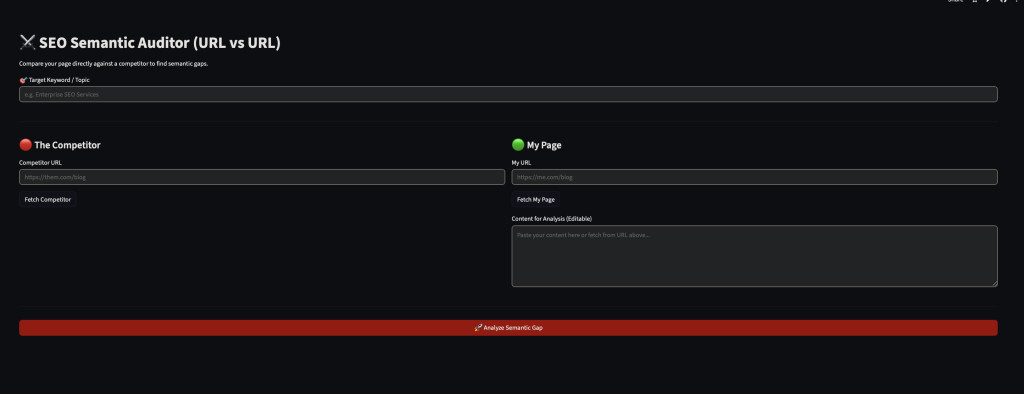

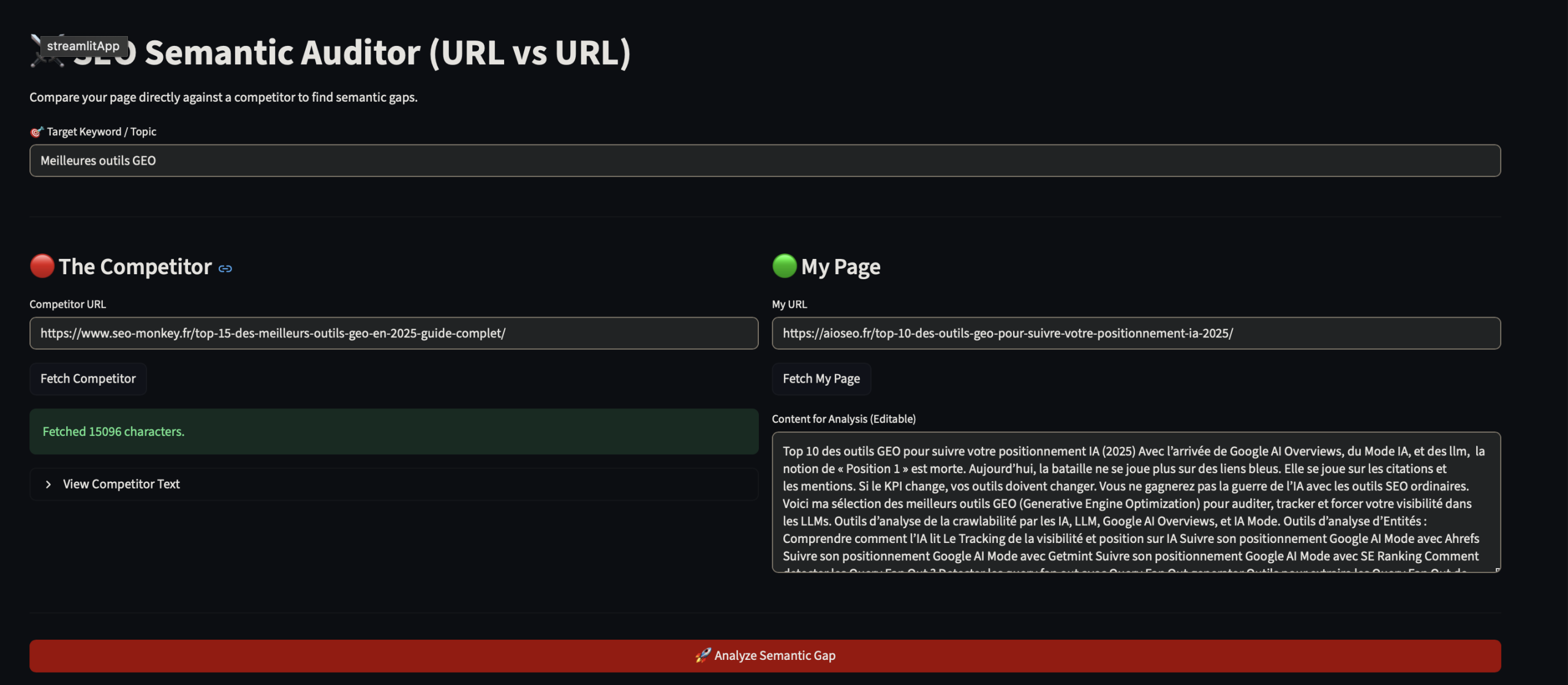

Semantic SEO analyzer (URL vs URL)

Why does a page rank ahead of yours? The answer no longer lies only in backlinks or keyword density. Whether it is the conventional engines (Google RankBrain/BERT) or the generative engines (Gemini, Perplexity, ChatGPT Search), they now share the same fundamental logic: vector proximity.

They no longer do simple word matching: they project your content into a multi-dimensional semantic space. If your vector is too far from that of the query, you do not exist for the LLM’s retriever, nor for Google’s ranking.

I developed this tool to audit this exact mathematical distance.

The Stack

The tool is based on:

- Vectorization (all-mpnet-base-v2): one of the strongest general-purpose sentence-transformers models. It encodes the deep context of your page to simulate the understanding of a modern engine.

- Extraction (KeyBERT): to isolate the entities and n-grams that form the semantic backbone of the competitor’s content.

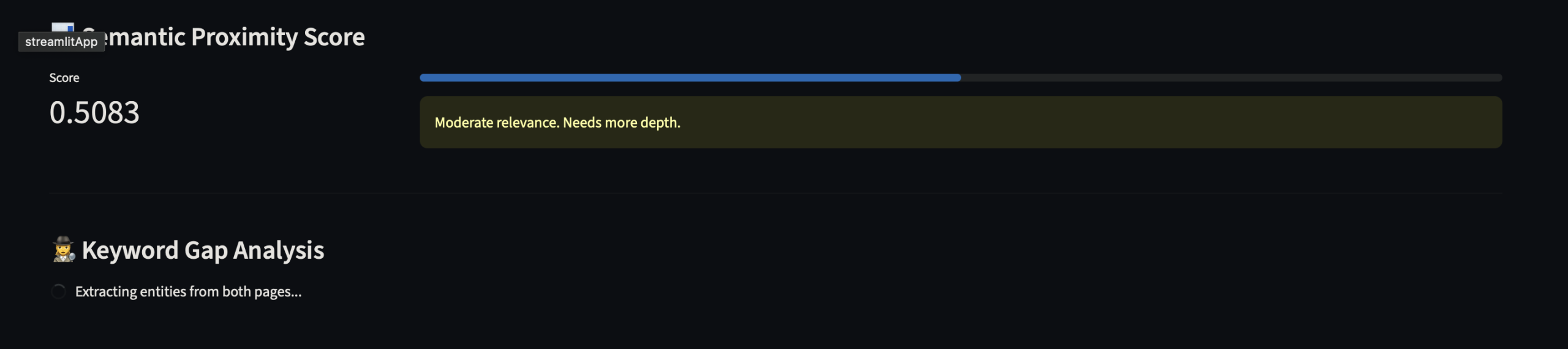

The KPIs of the semantic auditor

1. Semantic Proximity Score (cosine similarity)

This is the raw measure of your alignment with the search intent (the user’s ‘prompt’).

- For SEO (Google): a high score indicates that you answer the latent intent.

- For GEO (LLMs): this is crucial for RAG (Retrieval-Augmented Generation). The stronger your proximity, the better your chances of being selected as a source in an AI-generated answer.
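For reference, the cosine similarity of two vectors is their dot product divided by the product of their norms. The sketch below computes it in plain Python on made-up 3-dimensional vectors; real embeddings from all-mpnet-base-v2 have 768 dimensions, but the formula is the same.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors: dot(u, v) / (|u| * |v|)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


query_vec = [0.2, 0.7, 0.1]        # toy "embeddings" (made up)
page_vec = [0.25, 0.6, 0.2]        # points in roughly the same direction
off_topic_vec = [0.9, 0.05, 0.0]   # points elsewhere

on_topic = cosine_similarity(query_vec, page_vec)
off_topic = cosine_similarity(query_vec, off_topic_vec)
```

The score depends only on the direction of the vectors, not on their length, which is why a long page and a short query can still be compared.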

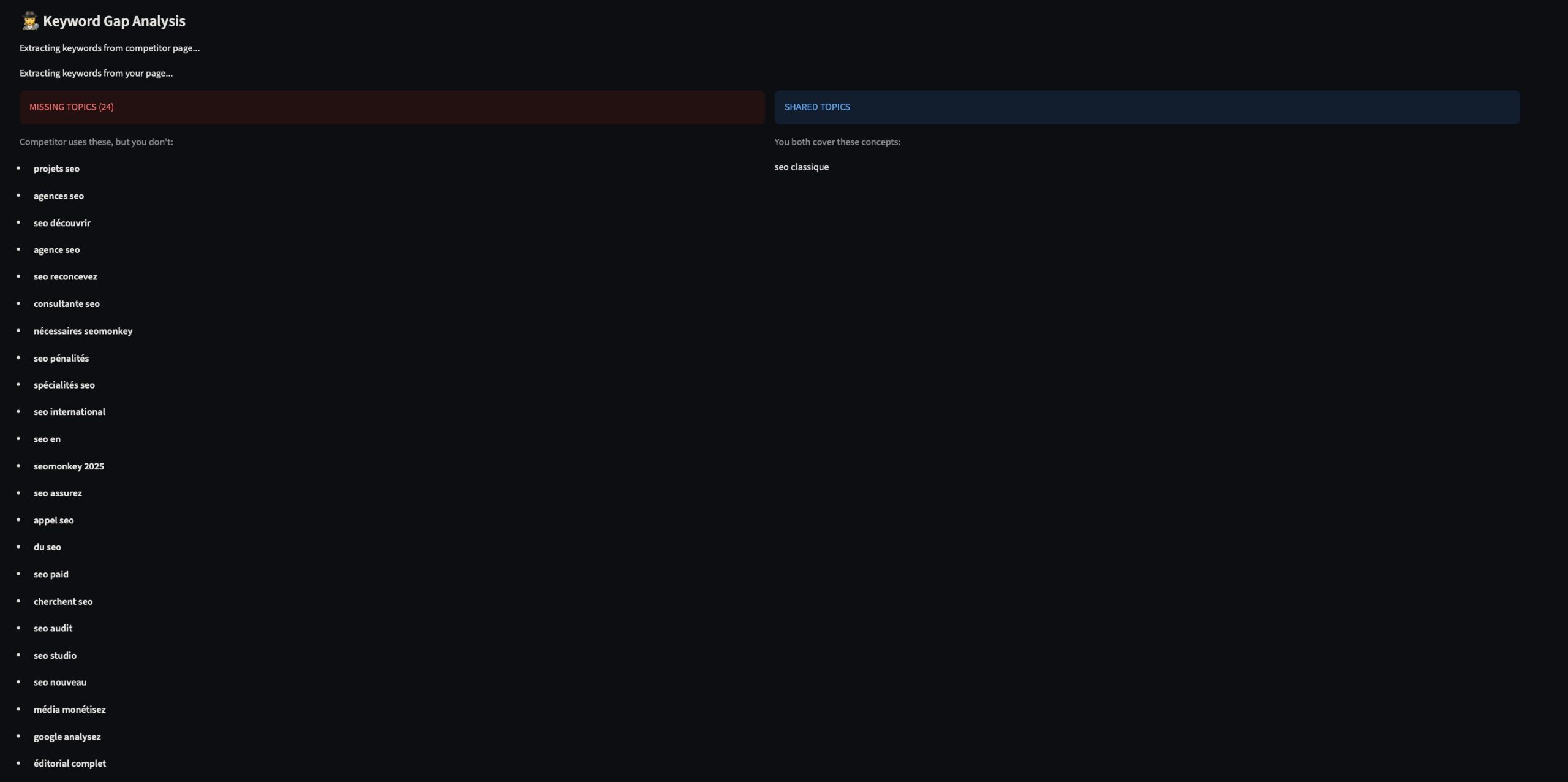

2. Gap Analysis (Missing Entities)

The semantic auditor compares your vector with that of the selected competitor.

- The output: it identifies the semantic concepts present on the competitor’s page but absent from yours. These are the ‘gaps’ that prevent your vector from aligning with the query.

Pro tip:

Don’t try to copy. Aim for a positive delta.

Target score: between 0.65 and 0.80.

Why not 1.0? A perfect match is suspicious. The goal is to cover the semantic field (the missing entities identified by the tool) to reduce the vector distance while keeping your own angle. This is what lets you rank on Google AND be cited by AIs.

Audit the semantics of your pages

How the semantic auditor works

- Input (Target & Benchmark): you set the target keyword and the two URLs to compare, your page vs. the competitor’s.

- Fetching & Cleaning: the tool scrapes the URLs, parses the DOM and cleans up the noise (menus, footers, scripts) to isolate only the meaningful textual content.

- Vectorization & Analysis: the engine (all-mpnet-base-v2) converts both the text and the keyword into dense vectors to capture the deeper context, beyond the words themselves.

- Scoring (Proximity Score): the tool computes the cosine similarity between the page vector and the target query vector. You get a raw relevance score (in practice between 0 and 1).

- Gap Analysis (Missing Entities): via KeyBERT, the tool extracts the competitor’s strongest n-grams/entities and keeps those that are absent from your content.

- Actionable results: you get the exact list of semantic concepts to add in order to close the vector gap with the leader.
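The steps above can be sketched as follows. This is a minimal illustration, not the tool's source: the list of "noise" tags, the substring-based gap filter and the function names are assumptions. The model calls use the public sentence-transformers and KeyBERT packages and are imported lazily, so the cleaning and gap helpers can be tried without installing them.

```python
from html.parser import HTMLParser


class MainTextExtractor(HTMLParser):
    """Collect visible text, skipping tags that usually hold noise."""
    NOISE = {"script", "style", "nav", "header", "footer", "aside"}

    def __init__(self):
        super().__init__()
        self.depth = 0      # > 0 while inside a noisy tag
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in self.NOISE:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.NOISE and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if not self.depth and data.strip():
            self.parts.append(data.strip())


def clean_html(html):
    """Return the text of a page without menus, footers and scripts."""
    parser = MainTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


def missing_entities(competitor_keywords, my_text):
    """Keep competitor keyphrases that never appear in my text."""
    mine = my_text.lower()
    return [kw for kw in competitor_keywords if kw.lower() not in mine]


def audit(keyword, my_text, competitor_text, top_n=20):
    """Score both pages against the keyword and list the gaps.

    Needs `pip install sentence-transformers keybert` (imported lazily).
    """
    from keybert import KeyBERT
    from sentence_transformers import SentenceTransformer, util

    model = SentenceTransformer("all-mpnet-base-v2")
    q, mine, theirs = model.encode([keyword, my_text, competitor_text],
                                   convert_to_tensor=True)
    extracted = KeyBERT(model=model).extract_keywords(
        competitor_text, keyphrase_ngram_range=(1, 2),
        stop_words="english", top_n=top_n)
    return {
        "my_score": float(util.cos_sim(q, mine)),
        "competitor_score": float(util.cos_sim(q, theirs)),
        "missing": missing_entities([kw for kw, _ in extracted], my_text),
    }


page = "<html><nav>Menu</nav><p>Hello <b>world</b></p><script>x=1</script></html>"
cleaned = clean_html(page)
gaps = missing_entities(["entity salience", "knowledge graph"],
                        "We cover the Knowledge Graph.")
```

A plain substring check is a crude way to decide that a concept is "absent"; matching on lemmas or on embeddings would be stricter, at the cost of more code.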

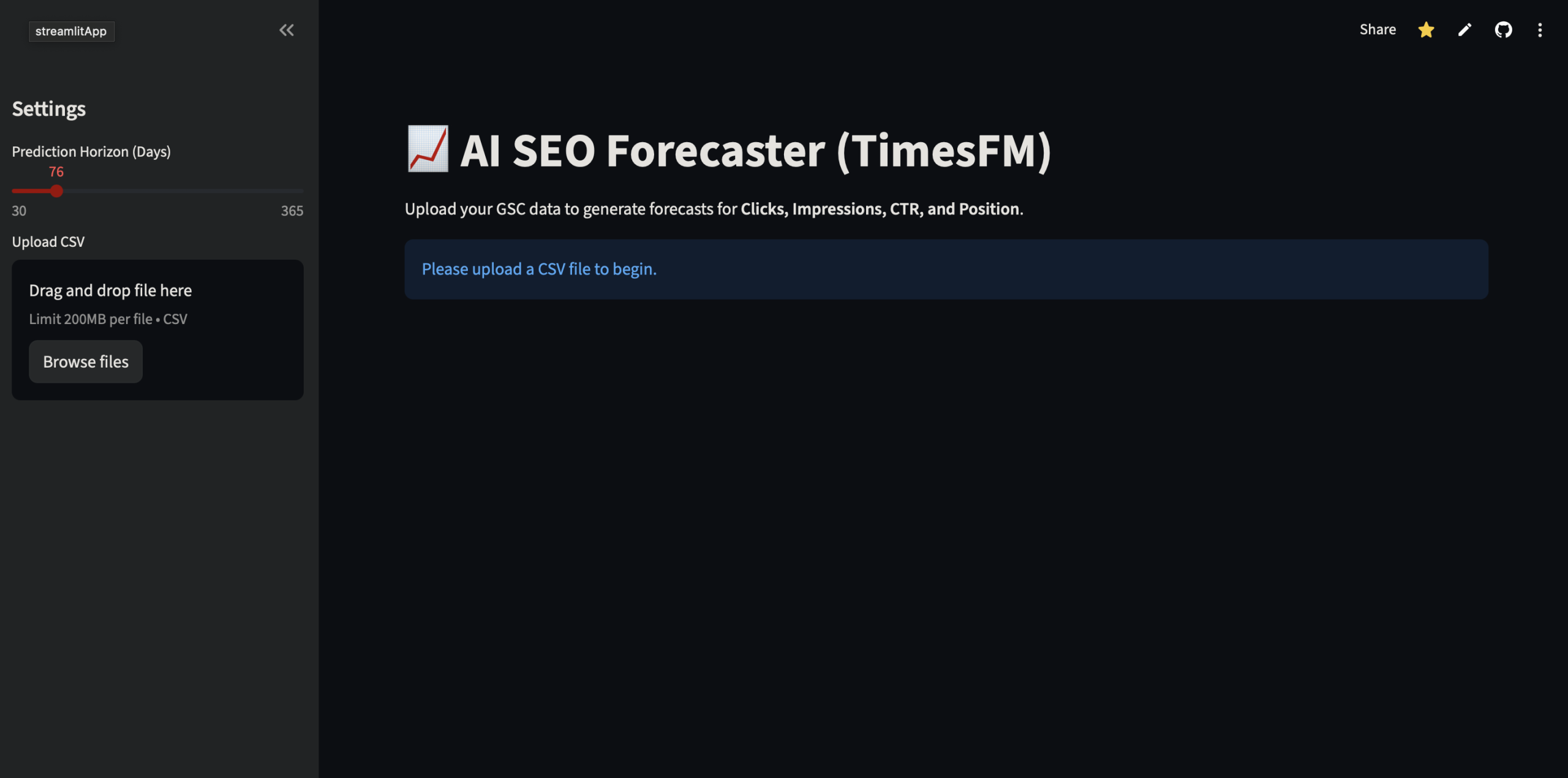

SEO traffic estimator (SEO Forecaster) – Powered by Google TimesFM

In SEO traffic forecasting, trend lines and moving averages stop working as soon as an update or a slightly complex seasonality comes into play.

I coded this tool because I wanted to test a more robust approach for my Search Console forecasts. The idea is to apply the Transformer architecture to SEO time series.

The stack

The tool runs on TimesFM (Time Series Foundation Model) from Google Research. It is a decoder-only transformer model trained on a massive corpus of 100 billion data points (time series).

Forecast your SEO traffic with Google TimesFM

Why this model for SEO traffic forecasting?

- Zero-shot capability: unlike statistical models (ARIMA), which require fine tuning for each property, TimesFM generalizes immediately to new data. It digests your GSC export and identifies the patterns without prior training.

- Long context: the model handles long context windows. It can ingest the entire GSC history (16 months) to detect complex seasonal cycles or trend breaks that traditional methods smooth out too much.

- Google on Google: there is a certain logical consistency in using a Google foundation model to predict metrics (Impressions, Clicks, CTR) from the Google ecosystem.

How does it work?

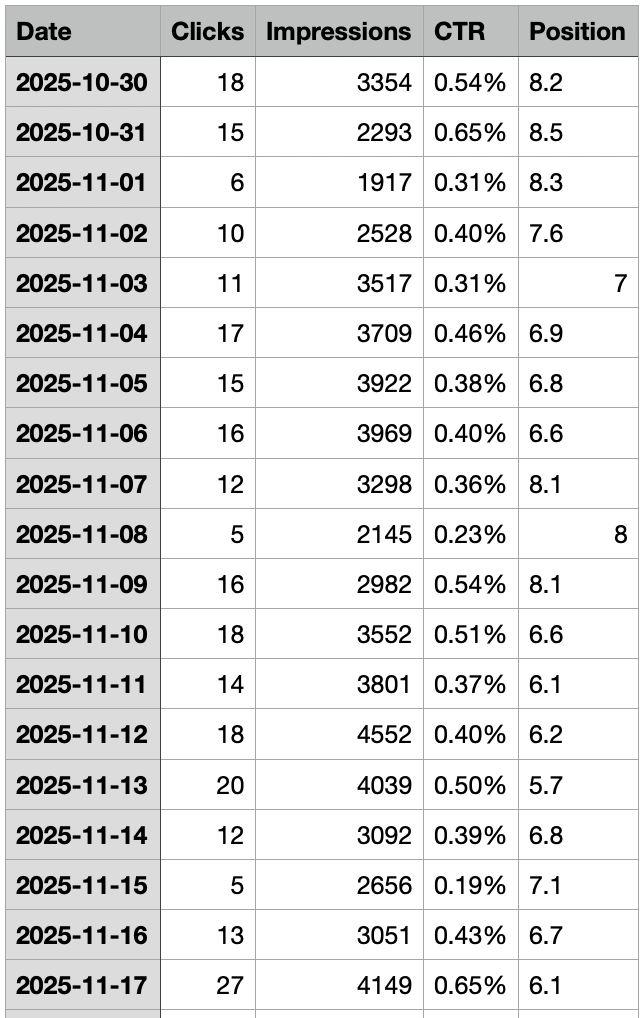

Concretely, you drop in your Search Console CSV with the columns date (very important), impressions, clicks, CTR and position. Here’s a sample of the dataset used in this example.

The script sends the data to the model, and you get back the predictions (Traffic, CTR, Position) with state-of-the-art (SOTA) accuracy.

As for settings, in practice there is virtually nothing to adjust: only the forecast horizon. Whether it is 30 days, 3 months or a year, the rest of the model remains frozen.
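The data side of this workflow can be sketched as follows. It is a sketch under assumptions: the column names, the ISO date format and the choice to fill missing days with 0 are mine, and `seasonal_naive` is only a placeholder. The `forecaster` argument marks the place where a call to the TimesFM model would go; its API is deliberately not reproduced here.

```python
import csv
import io
from datetime import date, timedelta


def load_daily_series(csv_text, metric="clicks"):
    """Read a GSC export and return (dates, values) with one point per day.

    Days missing from the export are filled with 0 (an assumption; you may
    prefer interpolation).
    """
    by_day = {date.fromisoformat(r["date"]): float(r[metric])
              for r in csv.DictReader(io.StringIO(csv_text))}
    day, last = min(by_day), max(by_day)
    dates, values = [], []
    while day <= last:
        dates.append(day)
        values.append(by_day.get(day, 0.0))
        day += timedelta(days=1)
    return dates, values


def seasonal_naive(values, horizon, period=7):
    """Placeholder forecaster: repeat the last `period` observations."""
    last_cycle = values[-period:]
    return [last_cycle[i % len(last_cycle)] for i in range(horizon)]


def forecast(csv_text, horizon, metric="clicks", forecaster=seasonal_naive):
    """Return [(future_date, predicted_value), ...] for `horizon` days."""
    dates, values = load_daily_series(csv_text, metric)
    future = [dates[-1] + timedelta(days=i + 1) for i in range(horizon)]
    return list(zip(future, forecaster(values, horizon)))


# Made-up sample; note that 2024-01-03 is missing from the export.
SAMPLE = ("date,clicks,impressions,ctr,position\n"
          "2024-01-01,10,100,0.10,8.2\n"
          "2024-01-02,12,110,0.11,8.0\n"
          "2024-01-04,9,90,0.10,8.5\n")
dates, values = load_daily_series(SAMPLE)
predictions = forecast(SAMPLE, horizon=3)
```

The only user-facing parameter is `horizon`, which matches the idea that the forecast horizon is the one setting you adjust.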

The results of Google TimesFM

The tool provides four outputs:

- a prediction of clicks (table + chart)

- a prediction of impressions (table + chart)

- a prediction of CTR (table + chart)

- a prediction of the average position (table + chart)

PS: the results are of course available for download.

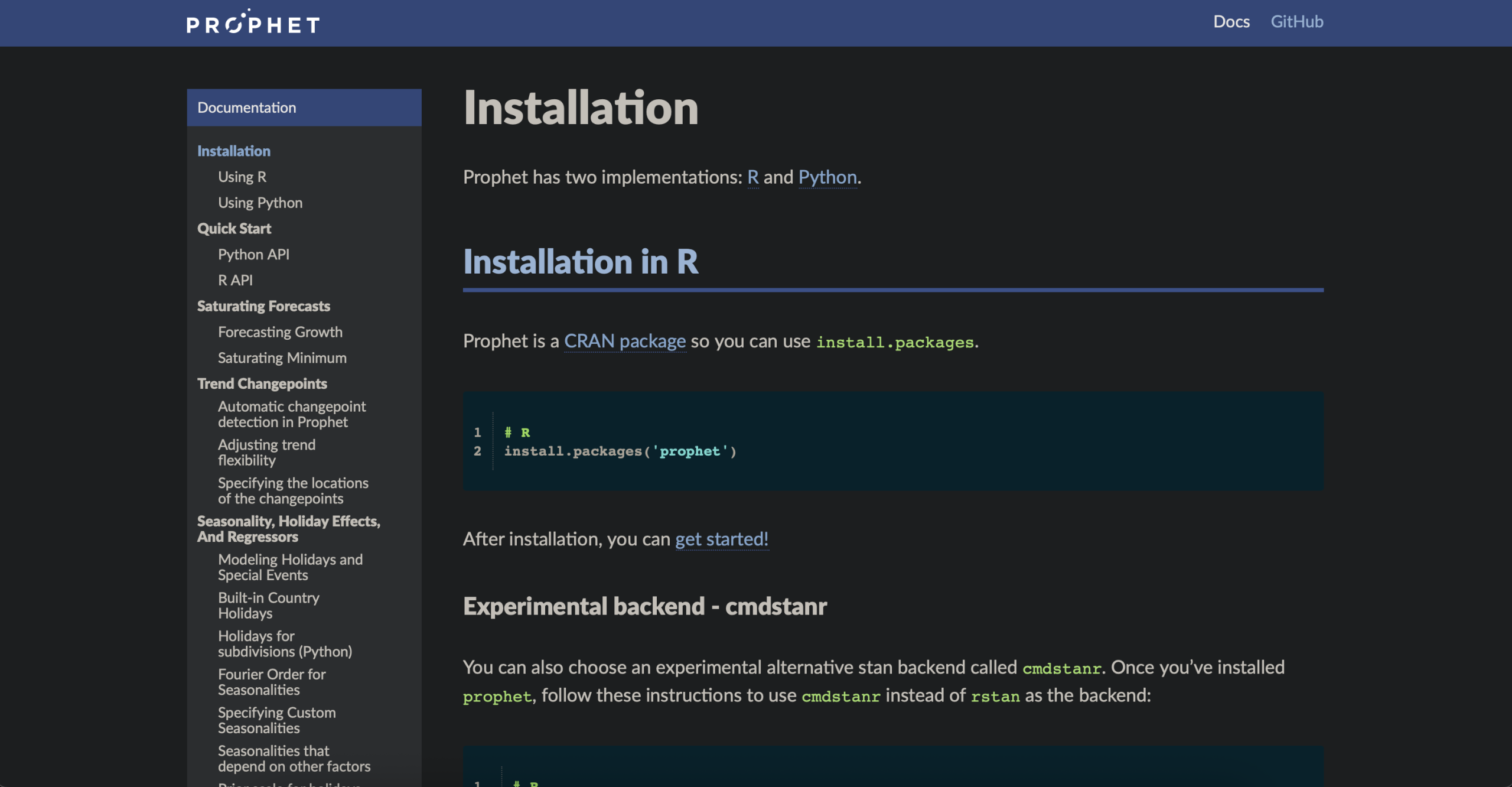

SEO traffic estimator (SEO Forecaster) – Powered by Meta Prophet

A trend is never just a straight line: it is affected by weekends, holidays and cyclical events. Trying to model it manually in Excel is a waste of time, and moving averages smooth out reality too much to be usable.

For those who need to master these cycles, I have implemented Prophet, the open-source forecasting model developed by Meta’s Core Data Science team.

The stack

The tool runs on Meta’s Prophet.

Unlike ‘black box’ approaches (such as deep neural networks), Prophet is an additive regression model. It does not just smooth a curve: it breaks it down mathematically into three intelligible building blocks: the trend, the seasonality (weekly/yearly) and the calendar effects (holidays).
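To illustrate what "additive" means here, the toy example below builds a series as trend + weekly seasonality + holiday effect. All numbers are made up, and the components are hard-coded for readability, whereas Prophet estimates them from your data.

```python
from datetime import date, timedelta


def trend(t, base=100.0, slope=0.5):
    """Linear trend: base level plus a constant daily growth."""
    return base + slope * t


def weekly(day):
    """Weekly seasonality: weekend dip (Monday=0 ... Sunday=6)."""
    return {5: -20.0, 6: -30.0}.get(day.weekday(), 0.0)


def holiday(day, holidays, effect=-40.0):
    """Calendar effect: fixed drop on listed holidays."""
    return effect if day in holidays else 0.0


def additive_model(start, n_days, holidays=()):
    """y(t) = trend(t) + seasonality(t) + holidays(t), one value per day."""
    days = [start + timedelta(days=t) for t in range(n_days)]
    return [(d, trend(t) + weekly(d) + holiday(d, holidays))
            for t, d in enumerate(days)]


# One week starting on a Monday, with a holiday on the Wednesday.
series = additive_model(date(2024, 12, 23), 7, holidays={date(2024, 12, 25)})
```

Because the components simply add up, each one can be plotted and questioned separately, which is what makes this family of models easy to read.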

Forecast your SEO traffic with Meta Prophet

Why Meta Prophet for SEO traffic forecasting?

- Prophet uses a decomposable additive model. This is robust statistics designed for real-world ‘business’ data.

- Native handling of seasonality: the model breaks down your GSC traffic into three components: Trend + Seasonality (weekly/yearly) + public holidays (Holidays).

- Changepoint detection: the algorithm automatically detects trend breaks (e.g. a Core Update or a migration) where a linear regression would blindly continue on its momentum.

- Robustness to outliers: it copes well with data ‘holes’ (common in GSC) and with outlier spikes, without derailing the whole forecast.

Meta Prophet is a very good fit if your traffic is strongly cyclical (e-commerce, news, seasonal).

It integrates ‘day of week’ effects (down on Sunday, up on Monday) into its forecasts.

How does it work?

Concretely, you drop in your Search Console CSV with the columns date (very important), impressions, clicks, CTR and position. The script sends the data to the model, and you get back the predictions (Traffic, CTR, Position) with state-of-the-art (SOTA) accuracy.

As for settings, in practice there is virtually nothing to adjust: only the forecast horizon. Whether it is 30 days, 3 months or a year, the rest of the model remains frozen.
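With the public `prophet` package, this workflow looks roughly like the sketch below. The function names and the GSC column names are assumptions; `ds` and `y` are the column names Prophet expects. The heavy imports are done lazily, so the small preparation helper can be run on its own.

```python
def to_prophet_rows(rows, metric="clicks"):
    """Rename GSC columns to the `ds` / `y` names that Prophet expects."""
    return [{"ds": r["date"], "y": float(r[metric])} for r in rows]


def forecast_with_prophet(rows, metric="clicks", horizon_days=30):
    """Fit Prophet on one metric and forecast `horizon_days` ahead.

    Needs `pip install prophet pandas` (imported lazily on purpose).
    """
    import pandas as pd
    from prophet import Prophet

    df = pd.DataFrame(to_prophet_rows(rows, metric))
    df["ds"] = pd.to_datetime(df["ds"])
    model = Prophet()                      # default settings, no tuning
    model.fit(df)
    future = model.make_future_dataframe(periods=horizon_days)
    forecast = model.predict(future)
    columns = ["ds", "yhat", "yhat_lower", "yhat_upper"]
    return forecast[columns].tail(horizon_days)


# Made-up rows, shaped like csv.DictReader output on a GSC export.
sample = [{"date": "2024-01-01", "clicks": "10", "impressions": "100"},
          {"date": "2024-01-02", "clicks": "12", "impressions": "110"}]
prepared = to_prophet_rows(sample)
```

Calling the function once per metric (clicks, impressions, CTR, position) would give the four forecasts, with `horizon_days` as the only setting.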

Results of Meta Prophet

The tool provides four outputs:

- a prediction of clicks (table + chart)

- a prediction of impressions (table + chart)

- a prediction of CTR (table + chart)

- a prediction of the average position (table + chart)

PS: the results are of course available for download.

PPS: both tests were run on the same dataset.

Comparison: Meta Prophet vs Google TimesFM

Meta Prophet (blue curve)

What we observe

- Exploding values: very high peaks and, above all, massive negative values (down to -1000).

- Very pronounced, unrealistic sine-wave-like oscillations.

- Abrupt break right after the end of the historical data.

Interpretation

- Prophet over-interprets the seasonality with too little data.

- It extrapolates a structure (trend + seasonality) that does not actually exist in the history.

- The model is not constrained to a range of values → it accepts negative clicks (which is economically absurd).

Prophet went a little crazy: unstable and poorly calibrated for a short, very noisy series.

As is, the forecast is not really usable unless you spend time tuning it (log transform, caps, priors, turning off some seasonalities, etc.).
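As an example of one of these fixes, the sketch below shows the log-transform idea: forecast log(1 + y) instead of y, then transform back, so the result cannot drop below zero. The `forecaster` argument is a hypothetical callable, and `linear_extrapolation` is only a toy model used to show the effect; it stands in for whichever model you actually use.

```python
import math


def forecast_non_negative(values, horizon, forecaster):
    """Forecast in log space, then map back so results cannot go below zero.

    forecaster: any callable (values, horizon) -> list of floats (hypothetical).
    """
    logged = [math.log1p(v) for v in values]      # log(1 + y), safe for y = 0
    predicted = forecaster(logged, horizon)
    return [max(0.0, math.expm1(p)) for p in predicted]


def linear_extrapolation(values, horizon):
    """Toy forecaster: continue the straight line through first and last point."""
    slope = (values[-1] - values[0]) / (len(values) - 1)
    return [values[-1] + slope * (i + 1) for i in range(horizon)]


clicks = [40, 30, 22, 15, 10]                   # made-up, falling series
raw = linear_extrapolation(clicks, 3)           # dips below zero
safe = forecast_non_negative(clicks, 3, linear_extrapolation)
```

On this falling series the raw extrapolation ends up with negative clicks, while the log-space version keeps decaying towards zero without crossing it.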

Google TimesFM (red curve)

What we observe

- Values are always positive, in the same order of magnitude as the history.

- Regular, moderate oscillation (probably weekly).

- Slight, gentle trend, with no sharp break.

- Clean continuity between history and forecast.

Interpretation

- TimesFM smooths the noise properly.

- It captures the short seasonality without over-amplifying it.

- The model is conservative → no crazy peaks.

The Google TimesFM forecast is clean, credible and actionable, even if it remains a little cautious.

Verdict

- Prophet is a structural model: it needs a long, clean history and strong assumptions. Apparently 16 months of data is not enough. That said, I admit that when you play with the parameters, the result is often more realistic…

- TimesFM is a zero-shot model trained on massive volumes of data.

On a short, noisy click series, TimesFM clearly wins.